![]()

I have written about Jevons Paradox twice now, once through the history of the semiconductor industry, and once as a broader examination of what happens when the cost of a critical economic input collapses. The pattern is consistent: demand expands to overwhelm the savings. Coal. Transistors. Bandwidth. Lighting.

Those pieces looked at the pattern itself. This one is different. I want to run a thought experiment forward, not backward.

I've also spent a lot of time on this site looking backward at computing history, watching Stewart Cheifet walk viewers through the early personal computer revolution on The Computer Chronicles, examining how word processing went from a curiosity to a necessity in a single decade, tracing George Morrow's role in making personal computing real, and following CP/M's arc from operating system of the future to historical footnote. I've run CP/M on physical RetroShield hardware, explored the Motorola 68000 that powered a generation of machines, and dug into how Infocom turned text adventures into a business at a time when 64K of RAM was generous. That immersion in where computing came from is exactly what makes the forward question so vivid, because at every stage, the people living through the transition couldn't see what was coming next. The engineers building CP/M didn't anticipate DOS. The engineers building DOS didn't anticipate the web. The engineers building the web didn't anticipate the iPhone. The pattern is always the same: cheaper compute enables things that were unimaginable at the prior cost.

The question isn't "will AI destroy jobs?" or "is the doom scenario wrong?" The question is: what becomes possible when thinking gets cheap?

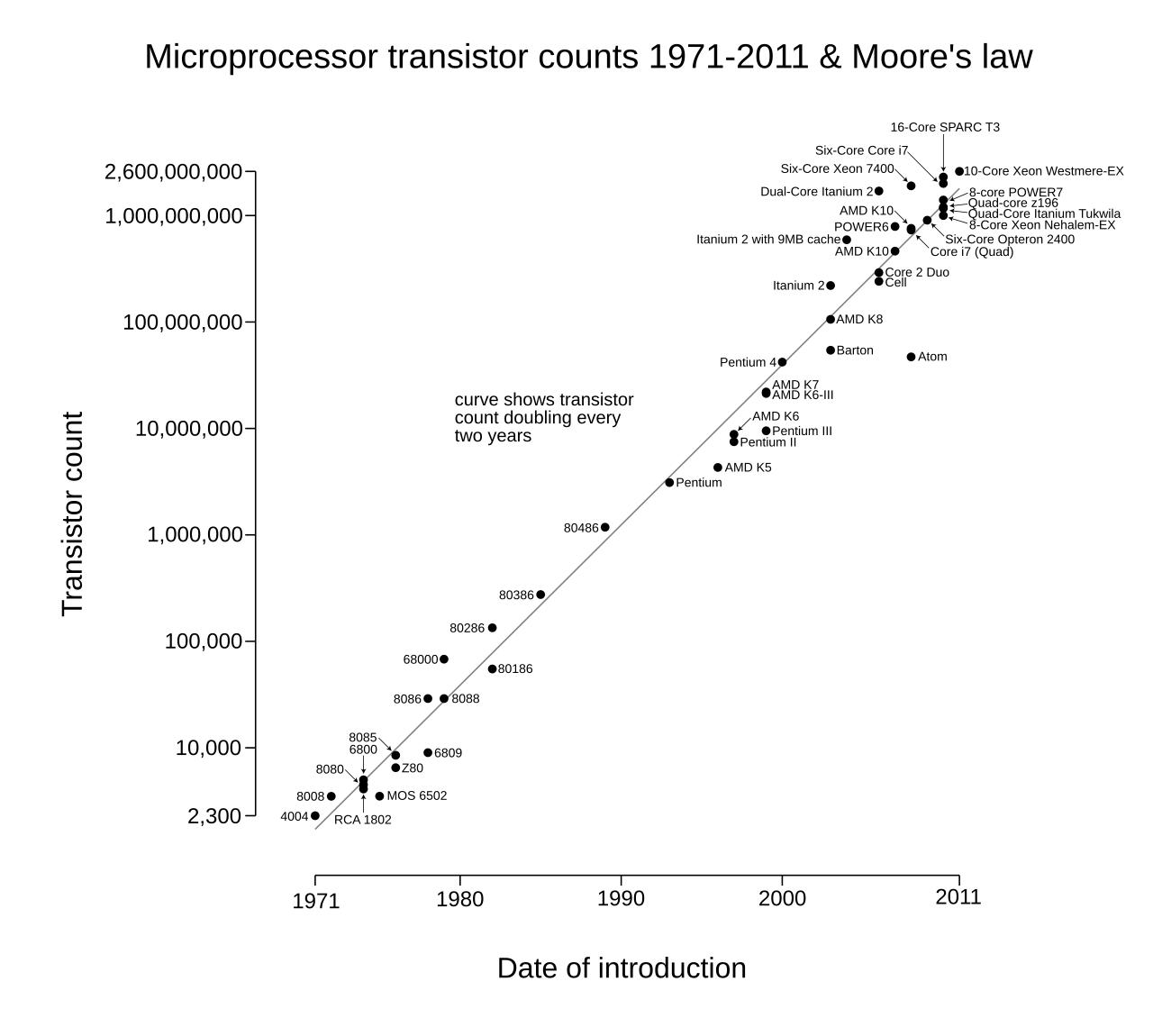

Because AI compute is following a cost curve that looks remarkably like the early decades of Moore's Law. And if that continues (if the cost per unit of machine intelligence drops by an order of magnitude every few years) the consequences extend far beyond making today's chatbots cheaper to run.

The Cost Curve

Moore's Law, in its original formulation, described the doubling of transistors per integrated circuit roughly every two years. But the economic consequence that mattered wasn't transistor density; it was cost per unit of compute. From the 1960s through the 2010s, the cost per FLOP declined at a compound rate that delivered roughly a 10x improvement every four to five years. A computation that cost \$1 million in 1975 cost \$1 by 2010. That decline didn't just make existing applications cheaper. It created entirely new categories of computing that were inconceivable at the prior cost structure.

AI inference costs are now following a similar trajectory, but faster. OpenAI's text-davinci-003, released in late 2022, cost \$20 per million tokens. GPT-4o mini, released in mid-2024, delivers substantially better performance at \$0.15 per million input tokens, a 99% cost reduction in under two years. Claude, Gemini, and open-source models have followed similar curves. DeepSeek entered the market in early 2025 with pricing that undercut Western frontier models by roughly 90%, compressing the timeline further through competitive pressure.

The GPU hardware underneath these models is on its own Moore's Law trajectory. GPU price-performance in FLOP/s per dollar doubles approximately every 2.5 years for ML-class hardware. Architectural improvements in transformers, mixture-of-experts routing, quantization, speculative decoding, and distillation compound on top of the hardware gains. The result is a cost curve where the effective price of a unit of machine reasoning is falling faster than the price of a transistor did during the semiconductor industry's most explosive growth phase.

This matters because we know, empirically, what happens when the cost of a foundational input follows an exponential decline. We have sixty years of data on it. The compute industry went from a few thousand mainframes serving governments and large corporations to billions of devices in every pocket, every appliance, every traffic light. Total spending on computing didn't shrink as costs fell; it expanded by orders of magnitude, because each 10x cost reduction unlocked categories of use that didn't exist at the prior price point.

The thought experiment is straightforward: apply that pattern to intelligence itself.

Today's Price Points Create Today's Use Cases

At current pricing (roughly \$3 per million input tokens for a frontier model like Claude Sonnet), AI is economically viable for a specific class of applications. Customer support automation. Code assistance. Document summarization. Marketing copy. Translation. These are the use cases where the value generated per token comfortably exceeds the cost per token, and where the interaction pattern involves relatively short exchanges.

But there are vast categories of potential use where current pricing makes the math uncomfortable or impossible. Consider:

Continuous monitoring and analysis. A financial analyst who wants an AI to continuously watch SEC filings, earnings calls, patent applications, and news feeds across 500 companies (analyzing each document in full, cross-referencing against historical patterns, and generating alerts) would consume billions of tokens per month. At current prices, this costs tens of thousands of dollars monthly. At 100x cheaper, it costs the price of a SaaS subscription.

Full-codebase reasoning. This one is already arriving. Anthropic's Claude Opus 4.6, working through Claude Code, can operate at the repository level, reading files, understanding architecture, running tests, and making changes across an entire codebase in a single session. I've used it to build a high-performance Rust-based ballistics engine and to develop Lattice, an entire programming language with a bytecode VM compiler, projects where the AI wasn't autocompleting fragments but reasoning across thousands of lines of interconnected code, tracking type systems, managing compiler passes, and understanding how changes in one module ripple through the rest. The constraint today isn't capability; it's cost. These sessions consume large volumes of tokens, which means they're viable for serious engineering work but not yet cheap enough to run continuously on every commit, every pull request, every deployment. At 100x cheaper, that changes. At 1,000x cheaper, every codebase has an always-on collaborator that has read everything and forgets nothing.

Personalized education at scale. A truly personalized AI tutor that adapts to a student's learning style, tracks their understanding across subjects, reviews their homework in detail, explains mistakes with patience, and adjusts its teaching strategy over months, this requires sustained, high-volume token consumption per student. Multiply by millions of students and the current cost structure breaks. At 100x cheaper, it's viable for a school district. At 1,000x cheaper, it's viable for an individual family.

Preventive medicine. Analyzing a patient's complete medical history, genetic data, lifestyle information, lab results, and the current research literature to generate genuinely personalized health recommendations (not the generic advice a five-minute doctor's visit produces, but the kind of comprehensive analysis that currently only concierge medicine patients paying \$10,000+ per year receive). At current token prices, this is prohibitively expensive for routine use. At 100x cheaper, it could be embedded in every annual checkup.

Ambient intelligence. The concept of AI that runs continuously in the background of your life (understanding your calendar, email, documents, and goals, proactively surfacing relevant information, drafting responses, scheduling meetings, flagging conflicts) requires sustained inference at volumes that would cost hundreds of dollars per day at current prices. At 1,000x cheaper, it costs less than your phone bill.

These aren't science fiction scenarios. They're applications of current model capabilities at price points that don't yet exist. The models can already do most of this work. The cost curve is the bottleneck.

The 10x / 100x / 1,000x Framework

Moore's Law didn't deliver its benefits in a smooth, continuous flow. It came in thresholds, price points at which qualitatively new applications became viable. The pattern with AI compute is likely to follow the same staircase function.

At 10x cheaper (plausible within 1-2 years): AI becomes viable for tasks that are currently "almost worth it." Small businesses that can't justify \$500/month for AI tooling find it worthwhile at \$50/month. Individual professionals (accountants, lawyers, doctors, engineers) integrate AI into their daily workflow not as an occasional tool but as a constant companion. The volume of AI-mediated work increases dramatically, but the character of work doesn't fundamentally change. This is the equivalent of the minicomputer era: the same kind of computing, available to more people.

At 100x cheaper (plausible within 3-5 years): The applications listed above become economically viable. Continuous analysis, full-codebase reasoning, personalized education, preventive medicine at scale. At this price point, AI stops being a tool you use and starts being infrastructure you run on. Every document you write gets reviewed. Every decision you make gets a second opinion. Every student gets a tutor. Every patient gets a diagnostician. The total volume of inference consumed per capita increases by far more than 100x, because new use cases emerge that weren't contemplated at the prior price. This is the personal computer moment: qualitatively new categories of use.

At 1,000x cheaper (plausible within 5-8 years): Intelligence becomes ambient and disposable. You don't think about whether to use AI for a task any more than you think about whether to use electricity for a task. Every appliance, every vehicle, every building, every piece of infrastructure has embedded reasoning running continuously. Your home understands your patterns and adapts. Your car negotiates traffic in real time not just with sensors but with models that predict the behavior of every other vehicle. Agricultural equipment analyzes soil conditions at the individual plant level. Supply chains optimize in real time across thousands of variables. This is the smartphone moment: computing so cheap and pervasive that it becomes invisible.

The Compounding Effect

There's a dynamic in AI cost reduction that didn't exist with traditional Moore's Law: cheaper inference enables better models, which enables even cheaper inference.

When inference is expensive, researchers are constrained in how they can train and evaluate models. Each experiment costs real money. Each architecture search consumes significant compute budgets. When inference costs drop, researchers can run more experiments, evaluate more architectures, and discover more efficient approaches, which further reduces costs. Distillation (training a smaller model to mimic a larger one) becomes more practical when the larger model is cheaper to run at scale. Synthetic data generation (using AI to create training data for other AI) becomes more economical. The cost reduction compounds on itself.

This is already happening. GPT-4 was used to generate synthetic training data for GPT-4o. Claude's training pipeline uses prior Claude models to evaluate and filter training examples. Google's Gemini models help design the next generation of TPU chips that will run future Gemini models. The AI equivalent of "using computers to design better computers" arrived in year three of the current wave, decades earlier in the relative timeline than it took the semiconductor industry to reach the same recursive dynamic.

The implication is that the cost curve isn't just declining; it's declining at an accelerating rate because each improvement enables the next one. The semiconductor industry saw this acceleration plateau after about fifty years as it approached physical limits of silicon. AI has no equivalent physical constraint on the horizon. The limits are architectural and algorithmic, and those limits have been falling faster than hardware limits ever did.

What the Semiconductor Analogy Actually Predicts

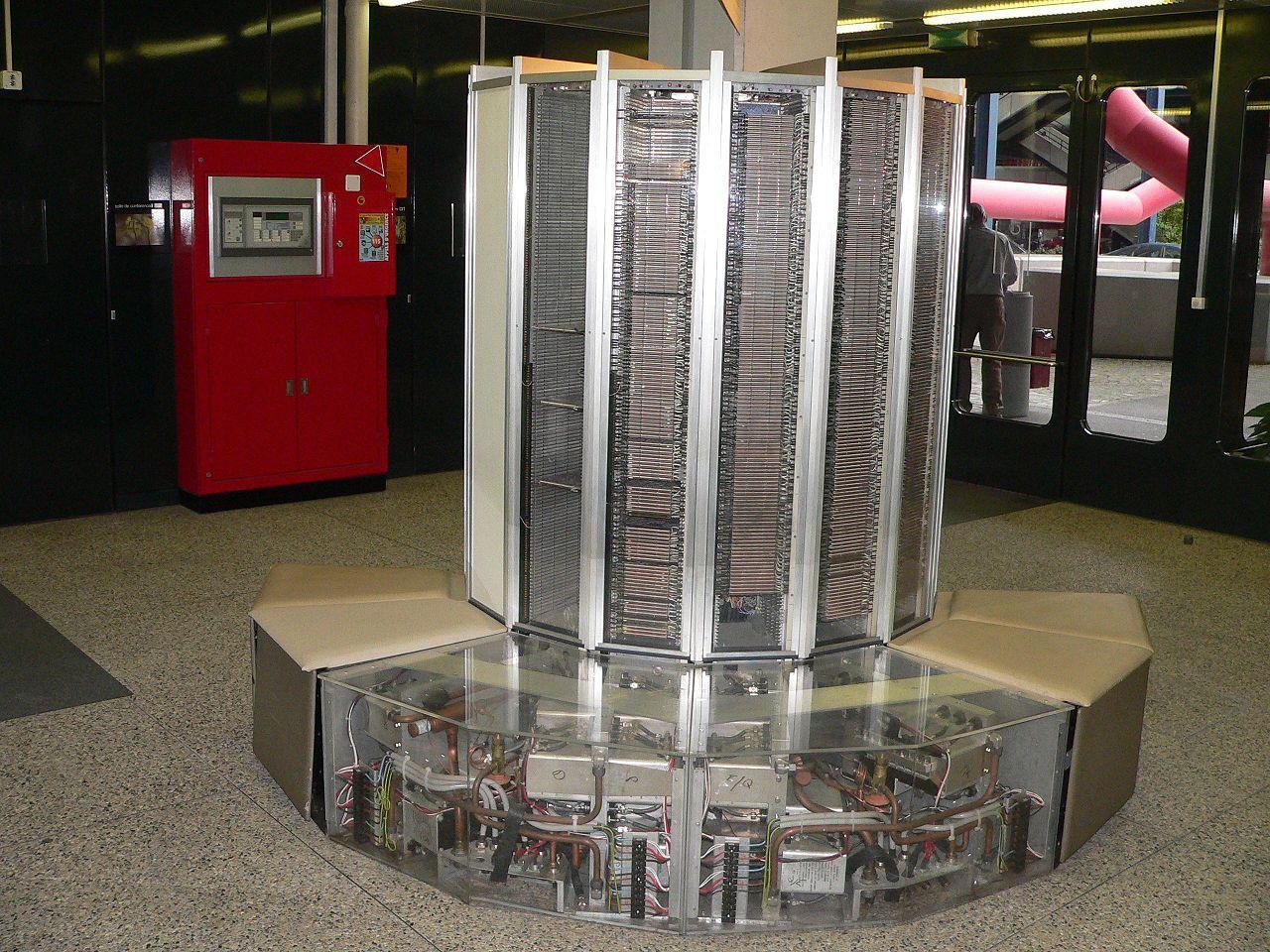

In 1975, a Cray-1 supercomputer delivered about 160 MFLOPS and cost \$8 million. In 2025, an iPhone delivers roughly 2 TFLOPS of neural engine performance and costs \$800. That's a 12,500x performance increase at a 10,000x cost decrease, a net improvement of roughly 100 million times in price-performance over fifty years.

Nobody in 1975 predicted Instagram, Uber, Google Maps, or Spotify. Not because these applications required fundamentally new physics; they just required compute that was cheap enough to run in a device that fit in your pocket. The applications were latent, waiting for the cost curve to reach them.

The history is instructive at each threshold. When a capable computer crossed below \$20,000 in the early 1980s, it unlocked small business accounting, the same work mainframes did, just for smaller organizations. When it crossed below \$2,000 in the mid-1990s, it unlocked home computing, and with it the web browser, email, and e-commerce. When capable compute crossed below \$200 in the smartphone era, it unlocked ride-sharing, mobile payments, and social media, none of which had any conceptual precursor at the \$20,000 price point. Each 10x reduction didn't just expand the existing market. It created a market that was literally unimaginable at the prior price.

The same principle applies to intelligence. We are in the mainframe era of AI. The applications we see today (chatbots, code assistants, image generators) are the equivalent of payroll processing and scientific computation on 1960s mainframes. They are real and valuable, but they represent a tiny fraction of what becomes possible when the cost drops by five or six orders of magnitude.

What are the Instagram and Uber equivalents of cheap intelligence? By definition, we can't fully predict them. But we can identify the structural conditions that will enable them:

When intelligence costs less than attention, delegation becomes default. Today, the cognitive cost of formulating a good prompt, evaluating the output, and iterating often exceeds the cost of just doing the task yourself. As models get cheaper, faster, and better at understanding context, the threshold shifts. Eventually, not delegating a cognitive task to AI becomes the irrational choice, the way not using a calculator for arithmetic became irrational.

When intelligence costs less than data storage, everything gets analyzed. Today, most data that organizations collect is never analyzed. It's stored, archived, and forgotten, because the cost of human analysis exceeds the expected value of the insights. When AI analysis is effectively free, every dataset gets examined. Every log file gets reviewed. Every customer interaction gets analyzed for patterns. The volume of insight generated from existing data increases by orders of magnitude.

When intelligence costs less than communication overhead, organizations restructure. This is already starting. A significant fraction of white-collar work is coordination: meetings, emails, status updates, project management. These exist because humans need to synchronize their mental models of shared projects. AI tools are already compressing this layer: meeting summaries that eliminate the need for half the attendees, project dashboards that maintain themselves, codebases where an AI agent tracks the state of every open issue so developers don't have to sit through standup. When AI can maintain a comprehensive, always-current model of a project's state, much of the coordination overhead that justifies entire job categories (project managers, program managers, business analysts, internal consultants) begins to evaporate. An organization that currently needs 50 people to coordinate a complex project might need 10, with AI handling the information synthesis that previously required human intermediaries. That's a genuine productivity gain. It's also 40 people who need to find something else to do, and the honest answer is that we don't yet know how fast the demand side creates new roles to absorb them.

The Demand Expansion Is the Story

The instinct when hearing "AI gets 1,000x cheaper" is to think about cost savings. That's the substitution frame: doing the same things for less money. And yes, that will happen. But the semiconductor analogy tells us that cost savings are the boring part of the story.

When compute got 1,000x cheaper between 1980 and 2000, the interesting story wasn't that scientific simulations got cheaper to run. It was that entirely new industries (PC software, internet services, mobile apps, social media, cloud computing) emerged to consume orders of magnitude more compute than the entire prior industry had used. The efficiency gain on existing applications was dwarfed by the demand expansion from new applications.

The same will likely be true for intelligence. Consider bandwidth as a parallel case. In 1995, a 28.8 kbps modem made email and basic web pages viable. Nobody was streaming video; it was physically impossible at that bandwidth, not merely expensive. By 2005, broadband had made streaming music viable. By 2015, streaming 4K video was routine. By 2025, cloud gaming and real-time video conferencing were infrastructure-level assumptions. Total bandwidth consumption didn't decline as it got cheaper. It increased by roughly a million times, because each generation of cost reduction enabled applications that consumed orders of magnitude more bandwidth than the previous generation's entire output.

The interesting story isn't that customer support gets cheaper. It's the applications that are currently impossible (not difficult, not expensive, but literally impossible at current price points) that become not just possible but routine.

A world where every small business has a CFO-grade financial analyst. Where every patient has a diagnostician who has read every relevant paper published in the last decade. Where every student has a tutor who knows exactly where they're struggling and why. Where every local government has the analytical capacity currently reserved for federal agencies.

And the nature of building software itself is changing in ways that go beyond "engineers with better tools." For most of computing history, writing code meant a human translating intent into syntax, line by line, function by function. AI assistance started as autocomplete: suggesting the next line, filling in boilerplate. But that phase is already ending. Today, with tools like Claude Code, the workflow has inverted. The human describes what they want (an architecture, a feature, a behavior) and the AI writes the implementation across files, runs the tests, and iterates on failures. The engineer's role shifts from writing code to directing and reviewing it, from syntax to judgment. At 10x cheaper, this is how professional developers work. At 100x cheaper, it's how small teams build products that previously required departments. At 1,000x cheaper, the barrier between "person with an idea" and "working software" functionally disappears. The entire concept of what it means to be a software engineer is being rewritten in real time, not by replacing engineers, but by redefining the skill from "can you write this code?" to "do you know what to build and why?"

These aren't efficiency improvements on existing systems. They're new capabilities that create new categories of economic activity, new forms of organization, and new kinds of products and services that don't have current analogs, just as social media, ride-sharing, and cloud computing had no analogs in the mainframe era.

The Question That Matters

I should be honest about what I don't know. The displacement scenarios for white-collar labor are not fantasy. AI is already capable enough to handle work that was solidly middle-class professional territory two years ago: document review, financial analysis, code generation, customer support, content production. The scenarios where this accelerates faster than the economy can absorb are plausible, and anyone who dismisses them outright isn't paying attention. When a technology can replicate cognitive labor at a fraction of the cost, the transitional pain for the people whose livelihoods depend on that labor is real and potentially severe. The speed matters: prior technology transitions unfolded over decades, and AI compression of that timeline into years is a genuine uncertainty that historical analogy doesn't fully resolve.

But there is a question that displacement scenarios consistently underweight, and it's the one I explored in my Jevons counter-thesis: what happens on the demand side? Every model that projects mass unemployment from cheap AI is implicitly assuming that the economy remains roughly the same size, with machines doing the work humans used to do. That's the substitution frame. And the substitution frame has been wrong at every prior technological inflection point, not slightly wrong, but wrong by orders of magnitude.

The semiconductor industry's answer, delivered over sixty years of data, is unambiguous. Every order-of-magnitude cost reduction generated more economic activity, more employment, and more total compute consumption than the one before it. The economy didn't shrink as compute got cheaper. It restructured around cheap compute and grew. Roughly 80% of Americans who need legal help can't afford it today. Personalized tutoring is a luxury good. Custom software is out of reach for most small businesses. These aren't speculative markets; they're documented unmet demand suppressed by the cost of human intelligence. When that cost collapses, the demand doesn't stay static.

The honest answer is that both things will happen simultaneously. Jobs will be displaced, some permanently. And new categories of economic activity will emerge that are currently inconceivable, just as social media and cloud computing were inconceivable in the mainframe era. The question is which force dominates, and how fast the transition occurs. I think the historical pattern favors demand expansion, but I hold that view with the humility of someone who knows the speed of this particular transition is unprecedented.

AI inference costs are following the same curve as semiconductors, possibly faster. The tokens-per-dollar ratio will improve by orders of magnitude. And when it does, the applications that emerge will make today's AI use cases look as quaint as running payroll on a room-sized mainframe.

The thought experiment ends where all Jevons stories end: with more consumption, not less. More intelligence deployed, not less. More economic activity built on cheap cognition, not less. The cost curve is the enabling condition. What gets built on top of it is the part we can't fully predict, and historically, that's always been the most interesting part.