Matt Shumer's "Something Big Is Happening" has been making the rounds, forwarded by founders, reposted by VCs, shared by worried parents and recent graduates. If you haven't read it, the core argument is straightforward: AI capabilities are advancing at an unprecedented pace, the public doesn't appreciate how fast things are moving, and roughly half of entry-level white-collar jobs will be displaced within one to five years. He frames this as a personal warning to the non-technical people in his life, drawing an explicit parallel to February 2020, the moment before COVID when the warnings were there but most people weren't listening.

It is a well-written, earnest piece, and it resonated for a reason. The capability gains are real. The perception gap is real. The practical advice is genuinely useful. Shumer deserves credit for engaging seriously with a question that most people in his position (CEO of an AI company) have financial incentives to either hype or deflect.

But the piece has a hole in the center of it, and it's the same hole that appears in nearly every AI displacement argument I've encountered. I've written about this through the lens of Jevons Paradox, explored it as a direct counter-thesis to displacement scenarios, and examined what happens when you apply Moore's Law to the cost of intelligence itself. The pattern is consistent, and Shumer's piece reproduces the analytical error at its core: it models what AI replaces without modeling what AI creates.

The Steelman

Before critiquing the piece, I want to present its strongest version in good faith, because Shumer gets several important things right, and dismissing the argument wholesale would be intellectually lazy.

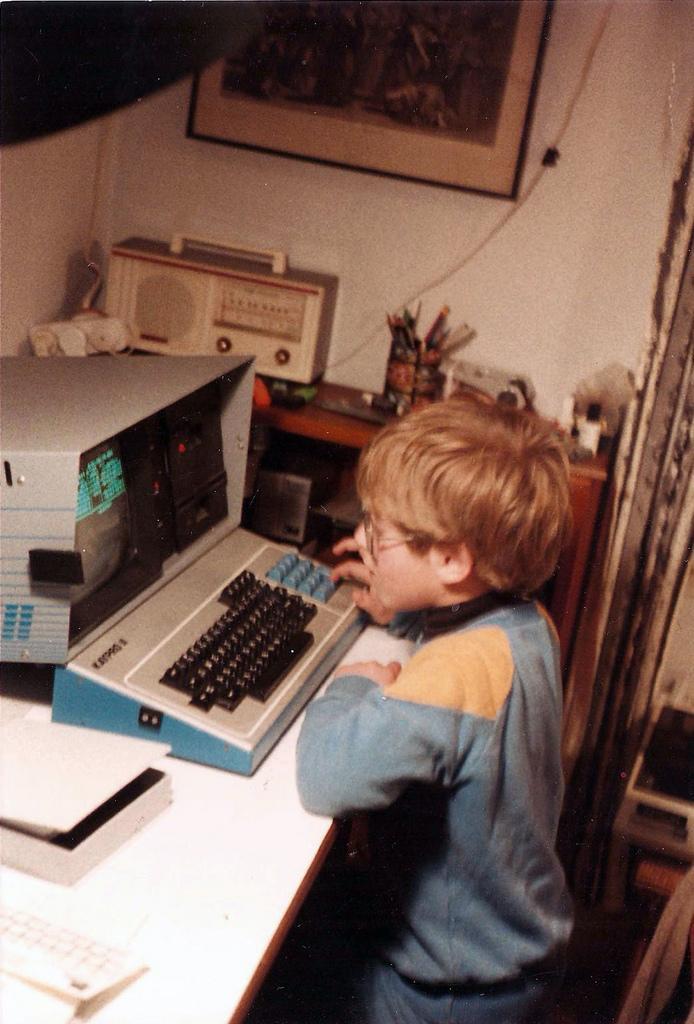

The capability curve is real. METR's measurements show the length of tasks AI agents can complete autonomously doubling roughly every seven months, possibly accelerating. Shumer cites this data, and it's legitimate. I've experienced the curve firsthand. Over the past year and a half, I've built a high-performance Rust-based ballistics engine and Lattice, an entire programming language with a novel phase-based type system, working across GPT-4, GPT-4o, Claude Haiku, Opus, and most recently Opus 4.6 with Claude Code. The progression itself is the data point. Early models could help with fragments. Today's frontier models reason across thousands of lines of interconnected code, tracking type systems, managing compiler passes, understanding how changes in one module ripple through the rest. These aren't toy demos. They're production-quality projects where the AI operated at the architectural level. The capability gap between late 2024 and early 2026 is genuinely striking.
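The following is a minimal Python sketch of what a seven-month doubling compounds to. It assumes the doubling holds steadily, although real trend lines are noisier. The function name `horizon_growth` is purely illustrative.

```python
def horizon_growth(months: float, doubling_months: float = 7.0) -> float:
    """Growth factor after `months` if a quantity doubles every `doubling_months`."""
    return 2.0 ** (months / doubling_months)


if __name__ == "__main__":
    # Assumes a steady ~7-month doubling; this is an idealization of a noisy trend.
    for months in (7, 14, 21, 28):
        print(f"{months} months: x{horizon_growth(months):.0f}")
```

At that assumed rate, eighteen months, roughly the span of the projects described above, works out to about a 6x increase.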

The perception gap is real too. Shumer makes a point that doesn't get enough attention: most people's experience with AI is limited to free-tier models that lag frontier capabilities by twelve months or more. Someone who tried ChatGPT once in 2024 and found it mediocre is extrapolating from a model that's already obsolete. The gap between the free experience and the paid frontier experience is larger than most people realize, and Shumer is right to flag it.

The self-improvement feedback loops are real. OpenAI has stated that GPT-5.3 Codex was "instrumental in creating itself." Anthropic's training pipeline uses prior Claude models to evaluate training examples. Dario Amodei predicts AI autonomously building next-generation versions within one to two years. Amodei's is a forecast, but the first two aren't speculative claims; they're descriptions of current practice, and they compress the improvement timeline.

Shumer's practical advice is sound: use paid tools, select the best available models, spend an hour a day experimenting, build financial resilience, develop adaptability as a core skill. This is good counsel regardless of how the macro picture unfolds.

And the urgency is not manufactured. Whatever you think the economic consequences will be, the pace of capability improvement is unprecedented in the history of technology. Shumer is right that most people are not paying attention. Where he goes wrong is in what he concludes from that observation.

The Substitution Fallacy

Here is Shumer's core analytical error, and the one that most critiques of his piece also miss.

He treats "AI can do X" as equivalent to "AI will replace all humans doing X." His piece moves through a list of job categories (legal work, financial analysis, software engineering, medical analysis, customer service) and for each one, the logic is: AI can now perform this work at a level that rivals human professionals, therefore the humans performing this work are at risk. Implicit in this framing is the assumption that the economy stays roughly the same size, with machines doing work that humans used to do. The number of legal analyses needed stays constant. The number of financial models stays constant. The amount of software stays constant. AI just does it cheaper.

This is the substitution frame, and it has been wrong by orders of magnitude at every prior technological inflection point.

I explored this in detail in my Jevons counter-thesis. The mechanism is straightforward: when a critical input becomes dramatically cheaper, the addressable market for everything that uses that input expands. New use cases emerge that were previously uneconomical. Existing use cases scale to populations that were previously priced out. Total consumption of the now-cheaper input rises even as the per-unit cost falls.
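The mechanism can be sketched with a textbook constant-elasticity demand curve, in which the quantity demanded scales as price raised to the power of minus the elasticity. This is a simplifying assumption. The elasticity values below are hypothetical and were chosen only to show how the outcome depends on them. They are not estimates for legal, financial, or software markets.

```python
def demand(price: float, scale: float, elasticity: float) -> float:
    """Constant-elasticity demand curve: Q = scale * price ** (-elasticity)."""
    return scale * price ** (-elasticity)


def price_drop_effect(old_price: float, new_price: float,
                      elasticity: float, scale: float = 1.0) -> dict:
    """Compare quantity consumed and total spending before and after a price drop."""
    q_old = demand(old_price, scale, elasticity)
    q_new = demand(new_price, scale, elasticity)
    return {
        "quantity_ratio": q_new / q_old,
        "spending_ratio": (new_price * q_new) / (old_price * q_old),
    }


if __name__ == "__main__":
    # Hypothetical elasticities for illustration only; a 100x price drop (100 -> 1).
    for eps in (0.5, 1.0, 1.5):
        r = price_drop_effect(old_price=100.0, new_price=1.0, elasticity=eps)
        print(f"elasticity={eps}: quantity x{r['quantity_ratio']:.1f}, "
              f"spending x{r['spending_ratio']:.2f}")
```

In this toy model, a 100x price drop always raises the quantity consumed. Total spending on the input rises only when elasticity exceeds 1, and total spending determines whether there is more revenue to pay the people doing the work. That is why the size of the latent-demand response matters so much to the argument.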

The numbers on latent demand are not speculative. Roughly 80% of Americans who need legal help cannot afford it. Personalized tutoring is a luxury good; \$50 to \$100 per hour puts it out of reach for the average family. Custom software development, at \$50,000 or more per engagement, is inaccessible to most small businesses. Personalized financial planning is available only to households with six-figure investable assets. These aren't hypothetical markets. They are documented, unmet demand suppressed by the cost of the human intelligence required to serve them.

When Shumer writes that his lawyer friend finds AI "rivals junior associates" for contract review and legal research, the Jevons question is: what happens when legal analysis costs one-hundredth what it costs today? The answer isn't "lawyers lose their jobs." It's "hundreds of millions of people who currently have zero legal representation suddenly have access to it." The total volume of legal analysis performed doesn't shrink. It explodes. Whether that explosion employs as many human lawyers as today is a genuine question, but it's a very different question from "AI replaces lawyers," and Shumer's piece never asks it.

The same logic applies to every category on his list. If financial modeling becomes 100x cheaper, every small business gets CFO-grade analysis, a market expansion of orders of magnitude relative to the current financial services industry. If software development becomes 100x cheaper, the barrier between "person with an idea" and "working application" functionally disappears, and the total volume of software produced doesn't shrink; it expands to include millions of applications that nobody would build at current costs.

The Pandemic Analogy Problem

"Think back to February 2020." It's an emotionally effective opening, and it does exactly what Shumer intends: it activates the memory of a time when the warnings were there but most people didn't act until it was too late. As a rhetorical device, it works. As an analytical framework, it's misleading.

COVID was a pure externality. It destroyed without creating. A virus doesn't generate new economic activity as it spreads. It imposes costs, disrupts supply chains, and kills people. The appropriate response was defensive: stockpile supplies, get vaccinated, stay home. The framing of individual survival (how do I get through this) was correct for a pandemic because a pandemic doesn't create opportunity. It just destroys.

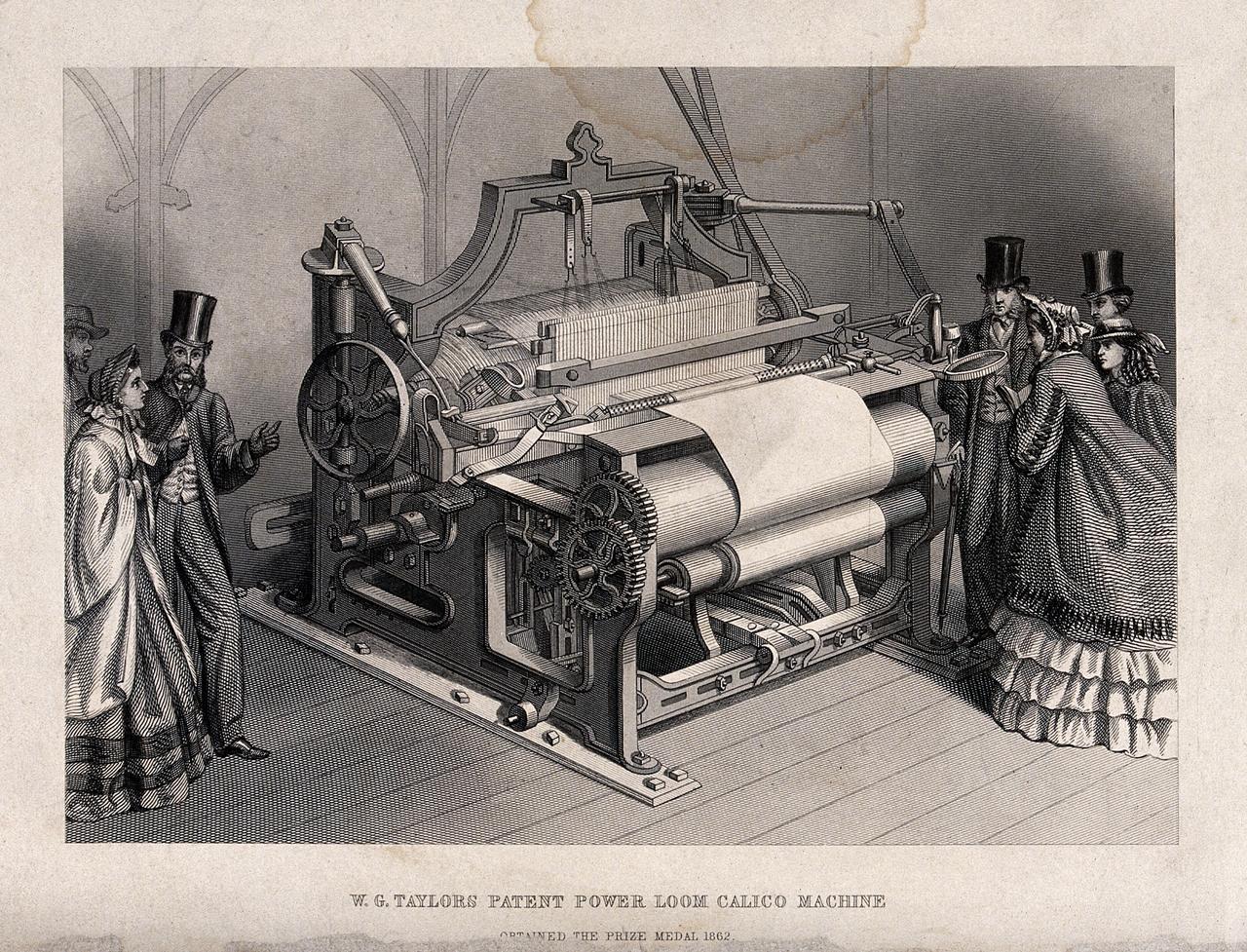

Technology transitions are categorically different. They create as they destroy, and historically, the creation overwhelms the destruction. The better analogy (the one Shumer doesn't use) is the semiconductor revolution. Computing destroyed millions of clerical, typist, switchboard operator, and filing clerk jobs. It also created the software industry, the internet economy, the mobile ecosystem, social media, cloud computing, e-commerce logistics, and millions of roles that had no conceptual precursor in the prior economy. Total employment didn't shrink. It restructured and grew.

The pandemic analogy does something else that's analytically costly: it frames the correct response as individual survival. How do I prepare? How do I stay ahead? This is the right question for a virus. It is the wrong question for a technology transition, where the correct frame is not "how do I survive displacement" but "what new things become possible?" Shumer's advice (use the tools, build adaptability, experiment daily) is good advice. But it's embedded in a survivalist frame that misses the larger economic picture. The person who learned to build websites in 1995 wasn't surviving the death of typesetting. They were participating in the creation of something that would be orders of magnitude larger than the industry it disrupted.

A Founder's Experience Is Not the Economy

"I describe what I want built, in plain English, and it just appears."

I believe him. I've had similar experiences. When I built the ballistics engine and Lattice, there were moments where the workflow felt qualitatively different from anything I'd experienced in over three decades of writing software. The capability is real and it's striking.

But Shumer is generalizing from the thinnest part of the adoption curve. A startup founder building prototypes with frontier AI tools is the absolute highest-leverage, lowest-friction use case for current technology. There are no compliance departments. No regulatory review. No integration with legacy systems built on COBOL. No liability frameworks that require a human signature. No union contracts. No procurement cycles measured in fiscal years.

The gap between "a founder can build a prototype in an afternoon" and "a hospital deploys AI-driven diagnostics at scale" is measured in years, not months. Regulatory friction, institutional inertia, liability requirements, and cultural resistance are real. The FDA doesn't move at startup speed. Neither do insurance companies, government agencies, or school districts. These aren't trivial obstacles; they're the mechanisms through which society manages risk, and they exist for reasons.

I don't want to overweight this argument. Institutional friction can be overstated, and appeals to regulation can become a way of avoiding engagement with the underlying capability shift. The important point is narrower: Shumer extrapolates from his personal productivity gain to "nothing done on a computer is safe," and that's an extrapolation error. A founder experiencing sudden personal leverage and projecting that curve onto civilization is a recognizable pattern in tech commentary. It's usually too bullish on the timeline and too narrow on the mechanism.

What Gets Created

This is the biggest gap in Shumer's piece, and the biggest gap in most commentary on AI and employment. He spends thousands of words on what AI can replace. He spends zero words on what AI makes possible for the first time.

I examined this through the Moore's Law for Intelligence framework: the 10x / 100x / 1,000x staircase of what becomes viable as the cost per unit of machine intelligence drops. The historical pattern from semiconductors is unambiguous: each order-of-magnitude cost reduction didn't just make existing applications cheaper. It created entirely new categories of economic activity that were literally unimaginable at the prior price point.

Nobody in 1975 predicted Instagram, Uber, or Spotify. That wasn't because they required new physics; they required computing power cheap and small enough to fit in a pocket. The applications were latent, waiting for the cost curve to reach them.

The same is true for intelligence. We can identify the structural conditions for demand expansion even if we can't predict the specific applications:

Small businesses with CFO-grade financial analysis, not because they hire CFOs, but because AI makes that analysis accessible at \$50 per month instead of \$200,000 per year. Personalized tutoring for every student, not an incremental improvement on existing education, but a qualitative shift in how learning works for the 95% of families who can't afford human tutors. Legal help for the 80% of Americans currently priced out. Preventive medicine embedded in every checkup, where an AI has read every relevant paper published in the last decade and cross-referenced it against the patient's complete history.

And the nature of software engineering itself is changing in a way that redefines the skill rather than replacing engineers. The workflow is already shifting from "write code line by line" to "describe architecture, direct implementation, review output." At 100x cheaper inference, small teams build products that previously required departments. At 1,000x cheaper, the barrier between having an idea and having working software effectively disappears. That's not displacement of engineers; it's an explosion in the total volume of software that gets built, and it requires people who know what to build and why.

We can't predict the Instagram of cheap cognition. But we can observe that the structural conditions (massive latent demand, rapidly falling costs, intense competition distributing gains to consumers) are identical to the conditions that preceded every prior wave of demand-driven economic expansion.

The Speed Question: Where Shumer Is Strongest

The legitimate uncertainty in Shumer's argument isn't whether displacement will happen. It will. The question is whether it happens faster than demand expansion can absorb.

Prior Jevons cycles unfolded over decades. Agricultural mechanization displaced 90% of farm workers over a century. Computerization restructured white-collar work over roughly forty years. If AI compresses displacement into two to three years, the question of whether demand expansion keeps pace becomes genuinely urgent. This is where Shumer's urgency has teeth, and it's the argument I take most seriously.

I was honest about this in both the counter-thesis and the Moore's Law piece: the speed of this transition is unprecedented, and historical analogy doesn't fully resolve the timing question. The transitional pain for people whose livelihoods depend on cognitive labor that AI can replicate is real and potentially severe.

But notice the asymmetry in Shumer's framing. Disruption happens at AI speed: step-function capability jumps, immediate adoption, rapid displacement. Demand expansion, on the other hand, is treated as essentially static or non-existent. The economy absorbs the shock and contracts. End of story.

This asymmetry isn't supported by the evidence. The smartphone app economy went from nonexistent to a major industry within five years of the App Store's 2008 launch, and it has since grown into a trillion-dollar ecosystem. Cloud computing spawned tens of thousands of SaaS companies within a decade. When a critical input becomes 100x cheaper, entrepreneurs move fast, because the profit opportunity is enormous. Shumer's own experience is evidence of this: he's a founder building products at unprecedented speed using AI tools. Scale that behavior across millions of entrepreneurs who suddenly have access to capabilities that were previously reserved for well-funded teams, and the demand side could move faster than in any prior technology transition.

The honest answer is that we don't know whether demand expansion will keep pace with displacement. That's a genuine uncertainty. But Shumer presents it as a foregone conclusion in one direction (displacement wins, full stop), and that's not an evidence-based position. It's a bet against the strongest empirical pattern in economic history.

What to Take from This

Shumer's practical advice to individuals is sound even if his macro analysis is incomplete. Use the tools. Build adaptability. Experiment daily. Don't ignore the capability curve; it's real, it's fast, and it will restructure how cognitive work gets done.

But don't mistake a substitution-only model for the full picture. The most consistent empirical pattern in economic history is that when a critical input gets dramatically cheaper, total consumption increases and the economy restructures around the cheaper input. Coal. Transistors. Bandwidth. Lighting. Every time, the predictions that efficiency would destroy demand were wrong, not because displacement didn't happen, but because demand expansion overwhelmed it. Betting that this pattern has finally broken requires an extraordinary burden of proof that Shumer's piece (eloquent, urgent, and emotionally resonant as it is) does not meet.

Something big is happening. What's missing from the conversation is the other half of it.