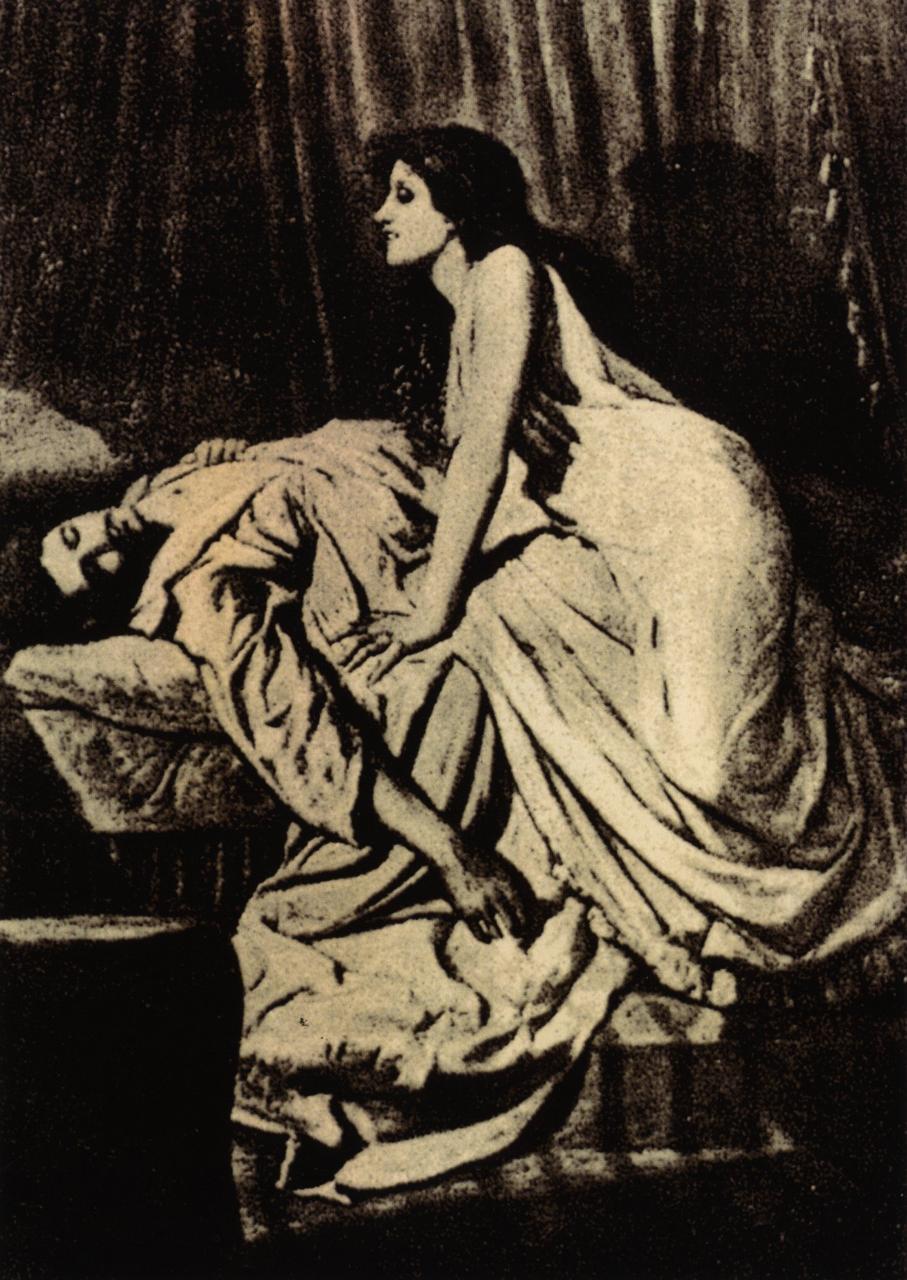

Steve Yegge's "The AI Vampire" has been circulating among developers and managers for the past few weeks, and it's striking a nerve. The core argument: AI makes you dramatically more productive (Yegge estimates 10x or more), but companies capture the entire surplus. You don't get a shorter workday. You get 10x the output at the same hours, with the cognitive load compressed into pure decision-making. The result is burnout on a scale the industry hasn't seen before. His prescription is blunt: calculate your \$/hr, work three to four hours a day, and refuse to let the vampire drain you dry.

It's a compelling piece, written with Yegge's characteristic directness and self-awareness. And it describes something real. But as I read it, I kept seeing something he doesn't name: a pattern I've been writing about for months.

This is the fourth piece in what has become a series on Jevons Paradox and AI economics. The first traced the paradox through the semiconductor industry. The second argued that AI displacement scenarios systematically undercount demand expansion. The third explored what happens when the cost of intelligence follows a Moore's Law trajectory. Along the way, I responded to Matt Shumer's displacement argument with the same framework.

Those pieces all looked at the macro picture: markets expanding, new industries forming, total economic activity growing. Yegge is describing the micro picture, what it actually feels like to be a human worker inside a Jevons expansion. And what he's describing, whether he uses the term or not, is Jevons Paradox operating on human attention.

The Jevons Pattern, One More Time

The pattern is simple enough to state in a sentence: when a critical input gets cheaper per unit of useful work, demand expands by more than the efficiency gain saves. Total consumption of the input rises rather than falls.
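
One standard way to see when the paradox kicks in is a constant-elasticity demand model. In this minimal sketch (the function name, scale, and elasticity values are hypothetical), efficiency means units of useful work per unit of input, and total input consumption works out to scale × input_price^(−elasticity) × efficiency^(elasticity − 1). It therefore rises with efficiency exactly when the price elasticity of demand for useful work exceeds 1.

```python
# Minimal sketch of the Jevons condition under an ASSUMED constant-elasticity
# demand curve for useful work. All names and values are hypothetical.

def input_consumed(efficiency: float, elasticity: float,
                   input_price: float = 1.0, scale: float = 100.0) -> float:
    """Units of raw input (coal, compute, attention...) consumed.

    efficiency -- units of useful work produced per unit of input
    elasticity -- price elasticity of demand for useful work (assumed constant)
    """
    # Higher efficiency lowers the effective price of each unit of useful work.
    price_of_work = input_price / efficiency
    # Constant-elasticity demand: quantity demanded scales as price^(-elasticity).
    work_demanded = scale * price_of_work ** (-elasticity)
    # Convert the useful work demanded back into units of input.
    return work_demanded / efficiency


for elasticity in (0.5, 1.0, 1.5):
    before = input_consumed(efficiency=1.0, elasticity=elasticity)
    after = input_consumed(efficiency=2.0, elasticity=elasticity)
    print(f"elasticity={elasticity}: input {before:.1f} -> {after:.1f}")
```

With these numbers, doubling efficiency cuts input use when elasticity is 0.5, leaves it flat at 1.0, and raises it when elasticity is 1.5. The historical Jevons cases are the ones where demand sat in that last regime.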

Coal got cheaper per unit of useful work. Total coal consumption surged as new applications became viable. Transistors got cheaper per unit of compute. Total compute spending grew by orders of magnitude. Bandwidth got cheaper per unit of data. Total data consumption exploded. The per-unit savings are overwhelmed by the explosion in total units demanded.

In my previous pieces, I applied this at the macro level. Cognitive output gets cheaper through AI. New industries emerge. Demand for cognitive work expands. The economy restructures around abundant, cheap intelligence. That argument is about markets, GDP, and employment categories: the aerial view.

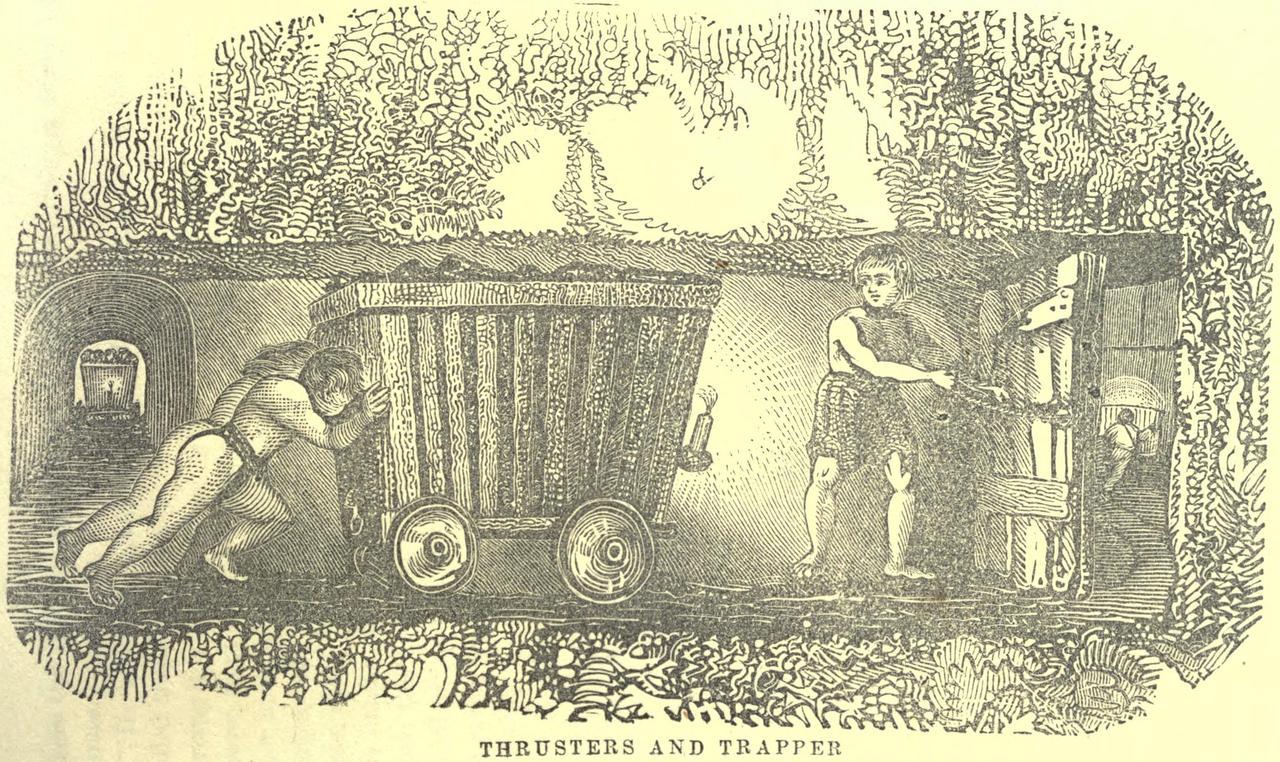

But Jevons has always had a micro counterpart. When coal got cheaper, individual mines didn't shut down early; they ran harder, longer, extracting more because the economics now justified it. When compute got cheaper, individual developers didn't write less code; they wrote vastly more, because the constraints that had limited what was practical evaporated. The expansion creates pressure at every level of the system, not just at the top.

The macro story is about new markets forming. The micro story is about what happens to the people at the point of production, the ones whose labor is the input that just got cheaper.

What Yegge Is Actually Describing

Yegge's framework centers on a value-capture trap. He presents two scenarios:

Scenario A: AI makes you 10x more productive. Your company captures the surplus. You now produce 10x the output at the same salary and hours. The company benefits. You burn out.

Scenario B: You recognize the \$/hr math. If you were worth \$150/hr before AI and now produce 10x the output, your effective rate should be \$1,500/hr, or equivalently, you should work one-tenth the hours for the same salary. You work three to four hours a day, produce what used to take a full day, and keep your sanity.
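
The arithmetic behind Scenario B is simple enough to spell out. This is a hypothetical sketch that takes the \$150/hr rate and 10x multiplier at face value and assumes an 8-hour pre-AI workday, which the scenario does not state; the function names are illustrative only.

```python
# Hypothetical illustration of the $/hr arithmetic in Scenario B.
# The $150/hr rate and 10x multiplier come from the scenario; the 8-hour
# baseline day is an ASSUMPTION.

def effective_hourly_rate(base_rate: float, multiplier: float) -> float:
    """Value of one AI-assisted hour, priced at the pre-AI rate per unit of output."""
    return base_rate * multiplier


def hours_for_same_output(baseline_hours: float, multiplier: float) -> float:
    """Hours needed to match a full pre-AI day's output."""
    return baseline_hours / multiplier


def output_in_preai_hours(hours_worked: float, multiplier: float) -> float:
    """How many pre-AI hours of output an AI-assisted day produces."""
    return hours_worked * multiplier


rate = effective_hourly_rate(150, 10)          # 1500
strict_hours = hours_for_same_output(8, 10)    # 0.8
short_day = output_in_preai_hours(3.5, 10)     # 35.0
print(f"${rate:,.0f}/hr; {strict_hours:.1f} h/day to match a pre-AI day; "
      f"3.5 h/day yields {short_day:.0f} pre-AI hours of output")
```

Under these assumptions, the three-to-four-hour day sits well above the strict one-tenth break-even, so even Scenario B leaves the employer with several times the pre-AI output.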

He frames this as a choice between being exploited and being strategic. And he's honest about the difficulty of Scenario B: most people can't negotiate a three-hour workday, most companies won't accept it, and the competitive dynamics push relentlessly toward Scenario A.

Yegge's most vivid metaphor is that "AI has turned us all into Jeff Bezos." At Amazon, Bezos sat atop a machine that handled volume (logistics, warehousing, customer service, shipping) while he focused exclusively on high-leverage decisions. AI does the same thing for individual workers. It absorbs the volume work (the boilerplate code, the routine analysis, the standard responses) and leaves you with a residue of pure judgment calls. Every decision is consequential. Every hour is cognitively expensive.

He also has an important moment of self-awareness. Yegge acknowledges that his own experience (forty years of engineering, unlimited AI tokens, deep familiarity with the tools) represents "unrealistic beauty standards" for the average developer. He's the equivalent of the fitness influencer whose workout routine is their full-time job. Most people don't have his context, his autonomy, or his leverage to negotiate Scenario B.

And he identifies a crucial accelerant: the startup gold rush. AI has made it cheap enough to launch a company that "a million founders are chasing the same six ideas." This intensifies competition, which intensifies the pressure to push the output dial higher, which feeds the vampire.

The Jevons Connection

Here's what Yegge is describing in Jevons terms.

AI makes cognitive output dramatically cheaper. Jevons predicts that demand won't fall in response; it will increase. That's exactly what happens. Companies don't say "same output, fewer hours." They say "10x the output, same hours." The efficiency gain doesn't reduce consumption of the input. It increases consumption. This is the paradox, and it is playing out precisely as the model predicts.

But there's something different about this Jevons cycle, something that doesn't have a precedent in the historical cases.

Coal doesn't get tired. Transistors don't burn out. Bandwidth doesn't need a nap. Every prior Jevons cycle involved an inert input. You could mine more coal, fabricate more chips, lay more fiber. When demand expanded, supply expanded to meet it, and the system found a new equilibrium at higher volume. The input didn't resist. It didn't have a biological ceiling.

Human attention does.

AI creates a concentration effect that Yegge describes precisely: it absorbs high-volume, routine work and leaves humans with a residue of pure judgment. The judgment work is, by definition, the most cognitively expensive kind of work, the kind that requires deep focus, contextual understanding, and the willingness to be wrong. And demand for this judgment work expands Jevons-style as AI makes the overall process cheaper. More projects get launched. More code gets written. More decisions need to be made. The volume of judgment calls scales with the volume of output, even as AI handles everything else.

The problem is that the biological supply of deep, focused judgment is fixed. The deep work literature (Cal Newport and others have documented this extensively) converges on roughly three to four hours per day as the upper bound for sustained, cognitively demanding work. This isn't a cultural preference or a lifestyle choice. It's a constraint imposed by neurobiology. Attention is a depletable resource that recovers on a fixed biological schedule.

This is the first Jevons cycle where expanding demand hits a hard biological ceiling on the input.
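
To make the ceiling concrete, here is a toy model in which AI absorbs all routine work and only a fixed share of output still needs a human decision. The share, the time per decision, and the output levels are hypothetical, not measured data; the point is only that judgment hours scale linearly with output while the ceiling stays put.

```python
# Hypothetical model: judgment hours demanded vs. a fixed daily ceiling.
# Every number below is an illustrative ASSUMPTION, not measured data.

DEEP_WORK_CEILING_HOURS = 4.0  # upper end of the three-to-four-hour range


def judgment_hours_needed(output_units: float,
                          judgment_share: float,
                          hours_per_judgment_unit: float) -> float:
    """Deep-work hours required when AI handles everything except judgment.

    output_units            -- units of work shipped per day
    judgment_share          -- fraction of units that still need a human decision
    hours_per_judgment_unit -- focused human time per decision-bearing unit
    """
    return output_units * judgment_share * hours_per_judgment_unit


for multiplier in (1, 2, 5, 10):
    needed = judgment_hours_needed(output_units=10 * multiplier,
                                   judgment_share=0.2,
                                   hours_per_judgment_unit=0.5)
    status = "over" if needed > DEEP_WORK_CEILING_HOURS else "within"
    print(f"{multiplier:>2}x output: {needed:.1f} judgment hours ({status} ceiling)")
```

Whatever the assumed share, some output multiplier pushes the required judgment hours past the fixed ceiling; cheaper routine work only changes where that crossover happens.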

Yegge's startup observation is also a Jevons phenomenon. AI made starting a company cheaper, so the number of startups exploded. More startups means more competition. More competition means more pressure to maximize output per person. The expansion creates its own acceleration, a feedback loop where cheaper cognitive output produces more ventures, which produce more demand for cognitive output, which increases the pressure on the humans in the loop.

And the "unrealistic beauty standards" problem has a Jevons name too: it's the efficiency benchmark effect. In every Jevons cycle, the most efficient user of the cheaper input sets the competitive pace for everyone else. The factory that adopted steam power first forced every competitor to adopt it or die. The company that adopted AI first forces every competitor to match its output-per-employee or lose. Yegge, with his forty years and unlimited tokens, is the equivalent of the first factory with a Watt engine. His output level becomes the standard against which everyone is measured, even though most people can't replicate his efficiency.

Where the Ceiling Matters

In every prior Jevons cycle, the resolution was supply expansion. Coal demand surged; mine more coal. Compute demand surged; fabricate more chips. Bandwidth demand surged; lay more fiber. The system found equilibrium at higher volume because the input could scale.

Human cognitive capacity doesn't scale. You can't mine more judgment. You can't fabricate more attention. The three-to-four-hour ceiling on deep work isn't going to move because a company's OKRs demand it.

This means a Jevons expansion in demand for human judgment has to resolve differently than prior cycles. There are really only three paths:

Better tooling that reduces the judgment burden. AI gets good enough to handle more decisions autonomously, pushing the human-in-the-loop threshold higher. The frontier of what requires human judgment retreats as AI capability advances. This is already happening; the boundary between "AI can handle this" and "a human needs to decide" is moving rapidly. But it's not moving fast enough to outpace the demand expansion, which is why Yegge's burnout observation is accurate right now even if the long-term trajectory favors less human involvement.

Organizational restructuring. More people, fewer high-stakes decisions each. Instead of one developer making judgment calls on 10x the output, you have three developers each handling a manageable portion. This is the "hire more" response, and it pushes back against the cost-reduction motive that drives Scenario A. Companies that pursue this path may produce better outcomes but at higher cost, which competitive dynamics tend to punish.

Cultural pushback. This is Yegge's \$/hr formula: workers internalize the fixed-supply economics of their own attention, price it accordingly, and refuse to let demand expansion drain it below sustainable levels. It is individually rational but collectively difficult, requiring either enough leverage to negotiate or enough cultural shift to change expectations.

Yegge's \$/hr formula is, in Jevons terms, an attempt to set an equilibrium price for a fixed-supply resource. It is the cognitive equivalent of OPEC production quotas, an effort to keep the price of a scarce input from collapsing because every supplier hands over as much of it as buyers will take. And like OPEC quotas, it works only if enough participants enforce it.

What This Means for the Macro Picture

I want to be honest about what Yegge's observation adds to the framework I've been building.

My previous pieces argued that when cognitive output gets cheaper, demand expansion will create new economic activity that exceeds the displacement. I stand by that argument. But I underweighted the human-in-the-loop constraint. The demand expansion is real: new markets form, new companies launch, total economic activity grows. But every unit of that expanded activity still requires some quantum of human judgment, and that judgment runs on biological hardware with a fixed daily capacity.

This doesn't invalidate the macro Jevons argument. Demand will expand. New industries will form. Total employment will restructure, not collapse. But the human attention constraint acts as a speed governor on the expansion. The economy can't scale cognitive output infinitely by just pushing the existing workforce harder, because the existing workforce has a biological ceiling on the input that matters most.

This argues for Yegge's three-to-four-hour workday not as a lifestyle aspiration but as something closer to an economic inevitability, the natural equilibrium point for a Jevons cycle operating on a fixed-supply input. When demand for an input exceeds the maximum sustainable rate of supply, the system must either find a substitute (AI handling more decisions autonomously), expand the supplier base (more workers, shorter hours each), or accept a constrained equilibrium (the three-hour workday). Some combination of all three is likely.
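
The three resolutions can be set side by side in a toy calculation. This is a minimal sketch under assumed numbers (a 4-hour sustainable ceiling per worker and a hypothetical 10 judgment hours demanded per day); the function names are illustrative, and a real system would mix all three paths rather than pick one.

```python
# Hypothetical sketch of the three resolutions for a fixed-supply input:
# substitute, expand the supplier base, or accept a constrained equilibrium.
# The ceiling and demand figures are ASSUMPTIONS for illustration.
import math

CEILING_HOURS = 4.0  # assumed sustainable deep-work hours per worker per day


def with_substitution(demand_hours: float, automated_fraction: float) -> float:
    """Substitute: AI takes over a fraction of the decisions themselves."""
    return demand_hours * (1.0 - automated_fraction)


def workers_needed(demand_hours: float, ceiling: float = CEILING_HOURS) -> int:
    """Expand the supplier base until no worker exceeds the ceiling."""
    return math.ceil(demand_hours / ceiling)


def constrained_output_share(demand_hours: float, workers: int,
                             ceiling: float = CEILING_HOURS) -> float:
    """Constrained equilibrium: cap hours and accept less output than demanded."""
    return min(1.0, workers * ceiling / demand_hours)


demand = 10.0  # hypothetical judgment hours demanded per day
residual = with_substitution(demand, automated_fraction=0.4)
print(f"after substitution: {residual:.1f} judgment hours")
print(f"workers needed at the ceiling: {workers_needed(residual)}")
print(f"share of demand one capped worker covers: "
      f"{constrained_output_share(residual, workers=1):.2f}")
```

Each lever moves a different term: substitution shrinks demand, hiring multiplies supply, and the cap simply truncates output at what the available attention can cover.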

The interesting implication is that the Jevons expansion and the burnout crisis are not contradictory phenomena. They're the same phenomenon viewed from different vantage points. The macro analyst sees demand expanding and new economic activity forming. The individual worker sees an unsustainable cognitive load. Both are correct. They're describing different aspects of the same system adjusting to a radically cheaper input.

The Vampire and the Paradox

Matt Shumer worries about displacement, losing your job to AI. Steve Yegge worries about what happens to the people who aren't displaced, who keep their jobs but get vampired. Both are describing real phenomena. Neither is the whole picture.

The Jevons framework encompasses both. Demand expansion creates new work, answering Shumer's displacement concern: the economy doesn't contract, it restructures. But the expansion concentrates cognitive load on the humans who remain in the loop, confirming Yegge's burnout observation, because the one input AI can't replace is the one input that can't scale.

Shumer's error is modeling only the displacement side. Yegge's error is modeling only the extraction side. The full picture includes both: an economy producing vastly more cognitive output, creating genuinely new economic activity, while simultaneously pushing the humans at the center of it toward a biological wall.

The vampire is real. It's also, like every Jevons cycle, a signal that something genuinely new is being created, that demand is expanding into territory that didn't exist before. The burnout isn't incidental to the expansion. It's a symptom of it. And like every prior Jevons cycle, the system will find an equilibrium, not because anyone plans it, but because a fixed-supply input eventually forces one. The question is how much damage the vampire does before we get there.