There's a Zilog Z80 in a graphing calculator sitting in a high school classroom right now. The student using it was born around 2008. The Z80 was designed in 1976. That processor is older than the student's parents.

This isn't a quirky footnote. It's a pattern. The Z80, the Motorola 68000, the MOS Technology 6502, the Intel 8051: these processors have been in continuous production and active deployment for forty years or more. The Z80 is closing in on fifty. Meanwhile, processors that were objectively superior by nearly every technical measure (the Zilog Z8000, the National Semiconductor 32016, the Motorola 88000, the Intel i960) are footnotes in Wikipedia articles that nobody reads.

What determines whether a processor lives for decades or dies in five years? I've spent the last two years building Z80 emulators, writing compilers for the Z80, running CP/M on physical RetroShield hardware, and exploring the Motorola 68000 through TI calculators. I've read William Barden's 1978 handbook that was still being reprinted in 1985, and Steve Ciarcia's build-your-own guide that assumed you'd wire up a computer from discrete chips. The deeper I've gone into this world, the more convinced I've become that processor longevity isn't really about the processor. It's about everything around it.

The Survivors

Four processors stand out for their extraordinary longevity. Each was introduced in the mid-to-late 1970s. Each is still manufactured or cloned today.

The Zilog Z80 (1976) was designed by Federico Faggin and Masatoshi Shima, both of whom had worked on the Intel 4004 and 8080. The Z80 was explicitly designed as a better 8080, backward-compatible with the 8080's instruction set but adding indexed addressing, a second register bank, a built-in DRAM refresh counter, and a single 5V power supply (the 8080 needed three voltage rails). It became the heart of CP/M machines, arcade cabinets, and eventually TI graphing calculators. Zilog's CMOS variant, the Z84C00, stayed in production until 2024; that April, Littelfuse (Zilog's current owner) finally announced end-of-life, 48 years after the Z80's introduction. The eZ80, a backward-compatible enhanced variant, continues in production, and third-party clones remain available. The Z80 instruction set isn't going anywhere even if the original silicon is.

The MOS Technology 6502 (1975) was designed by Chuck Peddle and Bill Mensch after they left Motorola. At \$25 when competing processors cost \$150-\$300, the 6502 was a revolution in affordability. It powered the Apple II, the Commodore 64, the Atari 2600, and the NES. Bill Mensch's Western Design Center still manufactures the W65C02S and W65C816S today, fifty years after the original design.

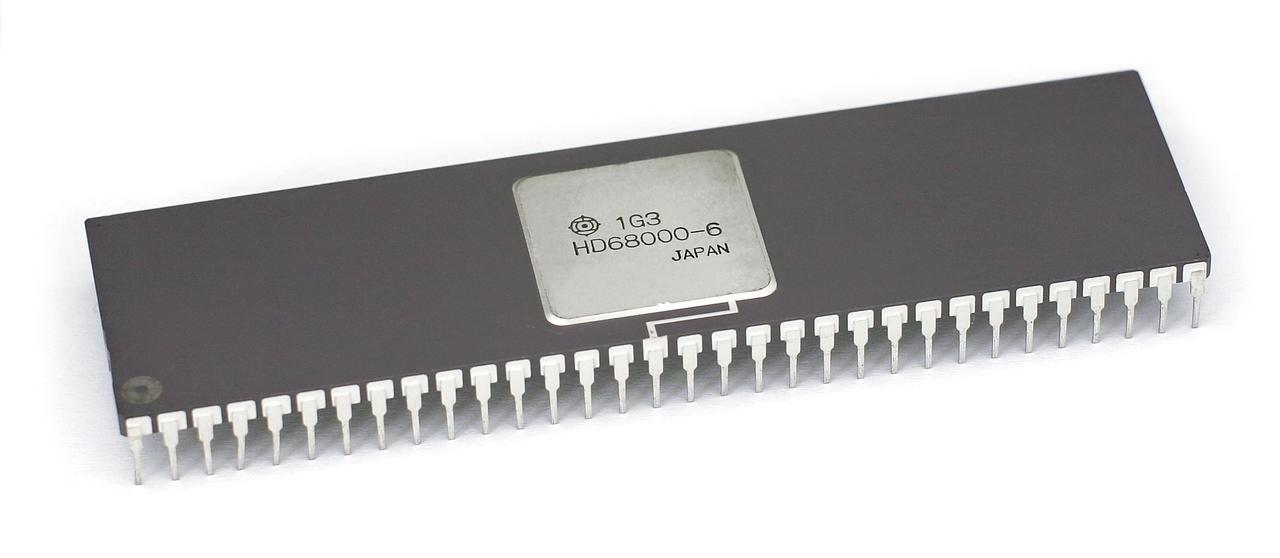

The Motorola 68000 (1979) was the 32-bit architecture that arrived a generation early: 32-bit registers and a 32-bit programming model behind a 16-bit external data bus. With a linear 24-bit address space and an orthogonal instruction set that programmers genuinely enjoyed using, it became the foundation for the original Macintosh, the Amiga, the Atari ST, the Sega Genesis, and Sun's first workstations. Its descendants (the 68020, 68030, 68040, and the ColdFire line now owned by NXP) kept the architecture alive in embedded systems, automotive controllers, and Texas Instruments calculators well into the 2020s.

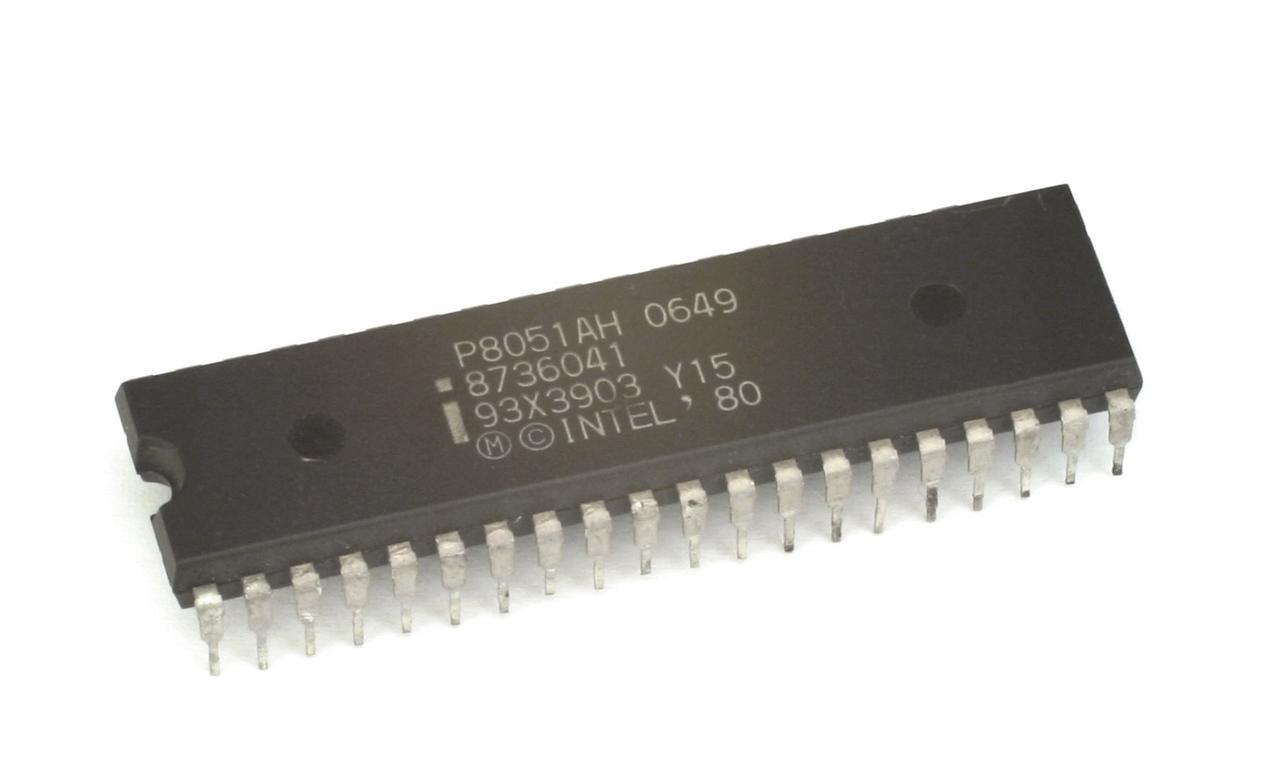

The Intel 8051 (1980) is perhaps the most quietly ubiquitous processor ever made. Designed as a microcontroller (a processor with RAM, ROM, timers, and I/O ports integrated on a single chip), the 8051 found its way into everything from washing machines to automotive engine controllers to industrial PLCs. Over two dozen companies have manufactured 8051 variants. If you've used an appliance, driven a car, or walked through a building with an elevator in the last forty years, you've interacted with an 8051 derivative.

The 8051 is also a case study in Jevons Paradox applied to silicon. As more manufacturers licensed and produced the 8051, unit costs fell. As unit costs fell, engineers designed it into applications that would never have justified a microcontroller at the original price: a toaster, a thermostat, a toy. Each new application expanded the market, which attracted more manufacturers, which drove costs lower still. The cycle fed itself for decades. Technically superior alternatives existed at every point along this curve, but they couldn't compete with an architecture whose ecosystem was compounding while their price-per-unit was still on the wrong side of the volume curve.
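The feedback loop in that paragraph can be sketched as a toy model. This is only an illustration of the shape of the cycle, not a reconstruction of real 8051 pricing: the function name `simulate_feedback_loop` and every number passed to it (starting cost, starting volume, the 20% cost drop per doubling, the demand elasticity) are assumptions I made up for the example.

```python
import math


def simulate_feedback_loop(start_cost, start_volume, learning_rate, elasticity, years):
    """Toy model: cost falls with cumulative volume, demand rises as cost falls.

    learning_rate: assumed fractional cost drop per doubling of cumulative volume.
    elasticity: assumed strength of the demand response to a lower price.
    Returns a list of (year, unit_cost, annual_volume) tuples.
    """
    exponent = math.log2(1.0 - learning_rate)  # negative: more volume, lower cost
    cumulative = start_volume  # baseline of units already shipped
    history = []
    for year in range(years):
        unit_cost = start_cost * (cumulative / start_volume) ** exponent
        annual_volume = start_volume * (unit_cost / start_cost) ** (-elasticity)
        history.append((year, unit_cost, annual_volume))
        cumulative += annual_volume
    return history


if __name__ == "__main__":
    # All inputs are hypothetical placeholders, not historical data.
    for year, cost, volume in simulate_feedback_loop(5.0, 1_000_000, 0.20, 1.5, 6):
        print(f"year {year}: unit cost {cost:.2f}, annual volume {volume:,.0f}")
```

Whatever values you plug in, the point is the direction: as long as cost falls with volume and demand rises as cost falls, each year's output makes the next year's part cheaper, and a newcomer starting at the top of the curve has to fight that compounding.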

The Fallen

For every processor that lasted decades, dozens vanished. Some of these were technically impressive, arguably more capable than the survivors.

The Zilog Z8000 (1979), designed as the Z80's successor, offered a 16-bit architecture with segmented memory addressing. It was more powerful than the Z80 in every measurable way. It lasted roughly five years in the market before fading into obscurity. The segmented memory model (the same curse that plagued Intel's 8086/286) made programming painful. And critically, it wasn't backward-compatible with the Z80. Every Z80 program, every CP/M application, every line of existing code was useless on the Z8000. Zilog was asking customers to abandon their entire software investment.

The Motorola 88000 (1988) was Motorola's clean-sheet RISC design, intended to eventually replace the 68k family. It was technically excellent: pipelined, superscalar-capable, and well-designed. Motorola couldn't sell it. Customers had millions of lines of 68k code, working products, trained engineers, and proven toolchains. The 88000 offered better performance but required abandoning everything. Motorola eventually surrendered and joined IBM and Apple to create the PowerPC, which at least had the marketing muscle of three companies behind it.

The National Semiconductor 32016 (1982) offered a 32-bit architecture at a time when the PC world was still on 16-bit. It was used in the Acorn Cambridge Workstation and a few other systems. But the early silicon shipped with errata that made reliable system design difficult, and by the time National got the bugs out, the market had moved on.

The pattern is consistent: technical superiority alone doesn't determine survival.

Five Factors That Determine Processor Longevity

After spending years in this world, I've identified five factors that separate the survivors from the fallen. They're listed roughly in order of importance, which is not the order most engineers would expect.

1. Second-Sourcing and Licensing

This is the single most important factor, and it's the one that engineers consistently underrate because it's a business decision, not a technical one.

The Z80 was second-sourced by Mostek, SGS-Thomson, Sharp, NEC, Toshiba, Samsung, and others. When Littelfuse, the current owner of Zilog, finally discontinued the standalone Z84C00 in 2024, the instruction set didn't die, because it was never dependent on a single manufacturer. This is exactly what second-sourcing was designed to protect against. It mattered enormously to design engineers in the 1980s and 1990s, because committing a product design to a single-source processor was career-threatening. If your sole supplier had a fab fire, or went out of business, or simply decided to discontinue the chip, your product was dead.

The 6502 was licensed to multiple manufacturers: Rockwell, Synertek, GTE, and later CMD and the Western Design Center. The 8051 took this to its logical extreme: Intel actively encouraged licensing, and the architecture was eventually manufactured by Atmel, Philips/NXP, Silicon Labs, Dallas/Maxim, Infineon, and dozens more. The 8051 became less a product and more a standard, an instruction set architecture that any competent semiconductor company could implement. It was, in hindsight, a preview of the model that ARM and RISC-V would later formalize: sell the design, not the chip, and let the ecosystem do the rest.

The 68000 family was produced by Motorola, Hitachi, Signetics, Mostek, and Toshiba. Later, the ColdFire and subsequent architectures maintained enough compatibility to keep the ecosystem alive under Freescale and then NXP.

The x86 architecture tells the same story at a larger scale. IBM refused to use Intel's 8088 in the original PC without a second source. That requirement forced Intel to license the design to AMD, a decision Intel spent the next four decades regretting and litigating. But the resulting duopoly is a major reason x86 survived the RISC revolution of the 1990s. When Sun, SGI, and DEC were pushing SPARC, MIPS, and Alpha, customers considering a switch to RISC had to weigh superior performance against the uncomfortable fact that each RISC architecture was, in practice, tied to the fortunes of a single systems vendor. x86 had two independent, competing suppliers. That mattered more than clock speeds.

Contrast all of this with the Z8000, which was essentially Zilog-only. Or the 88000, which was Motorola-only. Single-source processors carry existential risk for every product that uses them. Purchasing managers know this even when engineers don't.

2. Ecosystem and Toolchain Maturity

A processor without a mature toolchain is a science project. A processor with assemblers, compilers, debuggers, reference designs, application notes, textbooks, and a community of experienced engineers is an ecosystem.

The Z80 ecosystem by the mid-1980s was staggering. There were books (Rodnay Zaks' Programming the Z80, Barden's handbook, Ciarcia's build guide, Coffron's applications manual) available at any technical bookstore. There were assemblers, C compilers, BASIC interpreters, and Forth systems. There were thousands of CP/M applications. There were magazines publishing Z80 projects monthly. There were university courses teaching Z80 assembly. Every year, this ecosystem grew, and every year, the cost of switching to a different processor increased.

The 6502 had a similar ecosystem, driven heavily by the Apple II and Commodore 64 communities. The 8051 accumulated the largest ecosystem of any microcontroller family, with Keil (now ARM), IAR, SDCC, and many other toolchains providing development environments across every host platform.

When I wrote about how we learned hardware in 1983, I was documenting a snapshot of this ecosystem at its peak. Those books, those reference designs, those shared conventions: they weren't just educational resources. They were infrastructure. And infrastructure, once built, resists replacement.

3. ISA Simplicity and Predictability

There's a counterintuitive truth about instruction set architecture: the "best" ISA often isn't the one that survives. The one that survives is the one that's simple enough to implement cheaply, predictable enough to verify thoroughly, and small enough to teach in a semester.

The Z80's instruction set is large by 8-bit standards, with 158 base instructions and variants pushing toward 700 when you count all the addressing modes. But the fundamental execution model is simple: fetch an instruction, decode it, execute it. No pipeline. No branch prediction. No speculative execution. No out-of-order dispatch. The behavior is deterministic. Every instruction takes a documented number of T-states (clock cycles), so at 4 MHz, where one T-state lasts 250 ns, you can add up the cycle counts and predict your program's execution time to within a fraction of a microsecond.

This determinism is extraordinarily valuable in embedded systems. When you're designing an engine controller or a medical device, you need to know (not estimate, know) that your interrupt handler will complete within a specific time window. Pipelined processors with branch prediction make this analysis much harder. Simple processors make it trivial.
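To make the timing arithmetic concrete, here is a minimal sketch that adds up T-states for a three-instruction delay loop (`LD B,n` / `NOP` / `DJNZ`). The per-instruction counts are the standard Z80 figures; the helper names are mine, and the sketch assumes no wait states, no memory contention, and no interrupts during the loop.

```python
# T-state counts for a few Z80 instructions, from the standard timing tables.
# DJNZ costs 13 T-states when the branch is taken and 8 when it falls through.
T_STATES = {
    "LD B,n": 7,
    "NOP": 4,
    "DJNZ taken": 13,
    "DJNZ not taken": 8,
}


def delay_loop_t_states(iterations: int) -> int:
    """T-states for:  LD B,n / loop: NOP / DJNZ loop  with n = iterations."""
    if not 1 <= iterations <= 255:
        raise ValueError("this sketch covers B = 1..255 only")
    setup = T_STATES["LD B,n"]
    body = iterations * T_STATES["NOP"]
    # The branch is taken on every pass except the last one.
    branches = (iterations - 1) * T_STATES["DJNZ taken"] + T_STATES["DJNZ not taken"]
    return setup + body + branches


def t_states_to_microseconds(t_states: int, clock_hz: int) -> float:
    """Convert a T-state count to microseconds at the given clock frequency."""
    return t_states * 1_000_000 / clock_hz


if __name__ == "__main__":
    total = delay_loop_t_states(100)
    print(total, "T-states")  # 1702 T-states
    print(t_states_to_microseconds(total, 4_000_000), "microseconds at 4 MHz")  # 425.5
```

There is no cache state, predictor history, or pipeline occupancy to reason about; the answer is the same on every run, which is exactly what a worst-case timing analysis wants.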

The 6502 takes this even further. With only 56 instructions and 13 addressing modes, the entire ISA fits on a single reference card. You can hold the complete instruction set in your head. This isn't a limitation; it's a feature. Engineers who can reason about every instruction their processor executes build more reliable systems than engineers who rely on abstractions they don't fully understand.

The 8051 instruction set is similarly compact: 111 instructions, most executing in one or two machine cycles. The architecture includes bit-addressable memory, a feature that seems quirky until you're writing firmware for a device with dozens of individual control signals, at which point it becomes indispensable.
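For readers who haven't met bit addressing before, here is a small model of how it works. On the 8051, bit addresses 0x00 through 0x7F map onto the sixteen bytes of internal RAM at 0x20 through 0x2F. The Python function names below are mine, chosen to echo the `SETB` and `CLR` mnemonics, and the sketch leaves out the bit-addressable special function registers (bit addresses 0x80 and up).

```python
from typing import Tuple

BIT_AREA_BASE = 0x20  # first byte of the bit-addressable internal RAM (0x20..0x2F)


def locate_bit(bit_address: int) -> Tuple[int, int]:
    """Map a bit address 0x00..0x7F to (byte address, bit position)."""
    if not 0x00 <= bit_address <= 0x7F:
        raise ValueError("only RAM bit addresses 0x00..0x7F are modelled here")
    return BIT_AREA_BASE + (bit_address >> 3), bit_address & 0x07


def setb(ram: bytearray, bit_address: int) -> None:
    """Model of SETB: set one bit and leave the other seven untouched."""
    byte_address, position = locate_bit(bit_address)
    ram[byte_address] |= 1 << position


def clr(ram: bytearray, bit_address: int) -> None:
    """Model of CLR: clear one bit and leave the other seven untouched."""
    byte_address, position = locate_bit(bit_address)
    ram[byte_address] &= ~(1 << position) & 0xFF


def read_bit(ram: bytearray, bit_address: int) -> int:
    """Return the value (0 or 1) of a single addressable bit."""
    byte_address, position = locate_bit(bit_address)
    return (ram[byte_address] >> position) & 1


if __name__ == "__main__":
    ram = bytearray(128)  # lower 128 bytes of 8051 internal RAM
    setb(ram, 0x0A)       # bit 0x0A lives in byte 0x21, bit position 2
    print(hex(ram[0x21]), read_bit(ram, 0x0A))
```

On the real chip each of these is a single instruction, with no read-mask-write sequence for the firmware author to get wrong. That is why the feature earns its keep once you have dozens of one-bit flags and control lines.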

4. Power, Size, and Cost

The survivors share a common economic profile: they're cheap to manufacture, cheap to buy, and cheap to power.

A Z84C00 in CMOS draws microwatts in standby. A W65C02S runs on a coin cell battery for years. An 8051 derivative can be manufactured on mature process nodes that were paid for decades ago, with die sizes so small that the packaging costs more than the silicon. When your processor costs \$0.50 in volume and runs on the leakage current of a lithium cell, the engineering case for replacing it with something faster but more expensive becomes very hard to make.

This is where processor longevity intersects with the economics I've written about in the Jevons Paradox series. The relevant cost isn't just the chip; it's the total cost of the design: the processor, the toolchain, the engineering time, the qualification testing, the regulatory certification, and the opportunity cost of a redesign. A \$0.50 Z80 clone in a proven design with ten years of field data is almost impossible to displace, even if a \$0.30 ARM Cortex-M0 is technically superior, because the redesign and requalification costs dwarf the per-unit savings.
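The break-even arithmetic is simple enough to write down. The sketch below uses the \$0.50 and \$0.30 unit prices from the paragraph above; the \$250,000 redesign-and-requalification figure is a hypothetical placeholder, and the function name is my own. Prices are in integer cents to keep the arithmetic exact.

```python
def break_even_units(redesign_cost_cents: int, old_unit_cents: int, new_unit_cents: int) -> int:
    """Units that must ship before per-unit savings repay a one-time redesign cost."""
    savings = old_unit_cents - new_unit_cents
    if savings <= 0:
        raise ValueError("the new part must be cheaper per unit for a break-even to exist")
    # Integer ceiling division: round up to the next whole unit.
    return (redesign_cost_cents + savings - 1) // savings


if __name__ == "__main__":
    # Hypothetical redesign budget of $250,000; unit prices from the text.
    units = break_even_units(250_000 * 100, old_unit_cents=50, new_unit_cents=30)
    print(f"{units:,} units to break even")  # 1,250,000 units to break even
```

Under those assumptions the cheaper chip only pays for itself after 1.25 million units, and that is before counting the risk of a field failure in the new design. For a product shipping tens of thousands of units a year, the break-even date is effectively never.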

5. Inertia and Institutional Knowledge

The final factor is the hardest to quantify and the most powerful: institutional inertia.

Somewhere in Germany, there's a factory running a production line controlled by Z80-based PLCs installed in 1988. The line produces automotive components. It runs 24/7. It works. The engineer who designed the control system retired fifteen years ago. The firmware was written in Z80 assembly and documented in a binder that lives in a filing cabinet near the line.

Replacing this system would require: reverse-engineering the existing firmware (the original source code may or may not still exist), designing a new control system, writing new firmware, testing it against every production scenario the old system handles, qualifying the new system for automotive safety standards, scheduling downtime for installation, and training operators on the new system. The cost runs into hundreds of thousands of dollars. The risk is non-trivial; any bug could halt production.

So they order more Z80s. And the Z80 stays in production for another year.

Multiply this scenario by thousands of factories, millions of installed devices, and billions of lines of proven firmware, and you begin to understand why some processors simply cannot die. The cost of replacing them exceeds the cost of maintaining them, indefinitely.

This is also why the TI-84+ still uses a Z80. Texas Instruments has decades of TI-BASIC software, decades of teacher training materials, decades of standardized test approvals, and a user base that expects backward compatibility with programs written in 2004. The Z80 isn't the best processor for a modern calculator. But replacing it would require replacing everything else, and "everything else" is where the real value lives.

The Newcomen Pattern

There's a historical analogy I keep returning to. Thomas Newcomen built his atmospheric steam engine in 1712. It was inefficient, converting roughly 1% of the heat energy in coal into useful work. James Watt's improved design, patented in 1769 and sold commercially from 1776, was dramatically better: a separate condenser, later a double-acting cylinder, and eventually five times the thermal efficiency. By any rational engineering measure, the Newcomen engine should have vanished overnight.

It didn't. Newcomen engines continued to be built and operated for decades after Watt's design was available. In some mining operations, they remained in service into the 19th century. The reasons were the same ones that keep Z80s in factories today: the existing engines worked, the operators knew how to maintain them, the replacement cost was high, and the performance of the old engine was adequate for the task.

"Adequate for the task" is the phrase that explains processor longevity better than any technical specification. The Z80 is adequate for a graphing calculator. The 6502 is adequate for a simple embedded controller. The 8051 is adequate for a washing machine. And "adequate" plus "proven" plus "cheap" plus "available from multiple sources" is a combination that "superior but new and unfamiliar" almost never beats.

The Numbers Tell the Story

It's worth pausing to appreciate the sheer scale of the survivors' deployment.

The 8051 family has been manufactured in quantities estimated at over 10 billion units. That's not a typo. Ten billion. That puts the 8051 among the most-produced processor architectures in history, ahead of x86 in unit count. They're in your car; a modern automobile contains dozens of microcontrollers, many of them 8051 variants, handling everything from window controls to tire pressure monitoring. They're in your thermostat, your microwave, your garage door opener.

The Z80 and its clones have shipped in quantities that are harder to pin down precisely, but conservative estimates exceed a billion units across all manufacturers and derivatives. The 6502 family, counting all variants from the original through the 65C816 that powered the Apple IIGS and the Super Nintendo, is in a similar range.

The 68000 family took a different path: fewer total units but higher-value applications. Where the 8051 went wide and cheap, the 68k went deep and capable. It dominated the workstation market before RISC architectures displaced it, then settled into a long career in automotive and industrial control. NXP's ColdFire line carries an instruction set that traces directly back to the original 68000. The architecture didn't die; it evolved.

What's remarkable about these numbers is that they continue to grow. These aren't static installed bases slowly decaying as old equipment is retired. New products are still being designed with 8051 cores. New Z80-compatible processors are still being fabricated; even after Littelfuse discontinued the original Z84C00 in 2024, third-party clones and the eZ80 keep the instruction set alive. When I built a dual Z80 RetroShield, I ordered Z84C0020PEC chips that were still in stock from the final production runs. A 1976 design, manufactured nearly half a century later. And the fact that Zilog's discontinuation made international headlines tells you everything about how deeply embedded these chips remain. You don't mourn a processor nobody uses.

What This Means for Modern Processors

The ARM Cortex-M0, introduced in 2009, is arguably the first modern processor that has a plausible shot at matching the longevity of the 8-bit survivors. It's licensable (like the 8051), simple (like the 6502), power-efficient (like the Z84C00), and backed by an ecosystem that's growing rapidly. ARM's licensing model (selling the design, not the chip) mirrors the model that made the 8051 ubiquitous.

RISC-V, as an open ISA, goes even further. No licensing fees, no single company that can discontinue the architecture, no vendor lock-in. I've reviewed RISC-V boards and watched the ecosystem grow. If any modern ISA is positioned to last fifty years, it's RISC-V, not because it's the best architecture, but because it's the hardest to kill.

But here's the uncomfortable truth for anyone designing a new processor architecture: the window for establishing a forty-year processor is probably closed. The Z80, 6502, 68000, and 8051 all emerged during a period when the microprocessor market was being established. There were no entrenched incumbents. Every design win was greenfield. Every new application (calculators, arcade cabinets, industrial controllers, medical devices) was being designed for the first time with microprocessors.

That era is over. Every new design now competes against an installed base. Every new ISA competes against ARM's ecosystem. The switching costs that keep forty-year-old processors alive are the same switching costs that prevent new architectures from gaining traction. The moat works in both directions.

The Lesson

The processors that last aren't the ones that push the performance envelope. They're the ones that solve a problem well enough, cheaply enough, reliably enough, and from enough sources that replacing them is never worth the trouble. Technical excellence is necessary but not sufficient. What matters more is the web of dependencies (the toolchains, the trained engineers, the certified designs, the proven firmware, the institutional knowledge) that accumulates around a processor over decades.

The Z80 will outlive many of the engineers reading this, not because it's a great processor, but because it's woven into the fabric of systems that nobody has a compelling reason to redesign. The 8051 will outlive the Z80, because it's woven into even more systems. And somewhere in a high school classroom, a student is pressing buttons on a TI-84+ that runs on a fifty-year-old instruction set, completely unaware that the design executing their quadratic formula has been doing this job since before their parents were born.

That's longevity. Not the kind you engineer. The kind that happens when everything around the chip conspires to keep it in place.