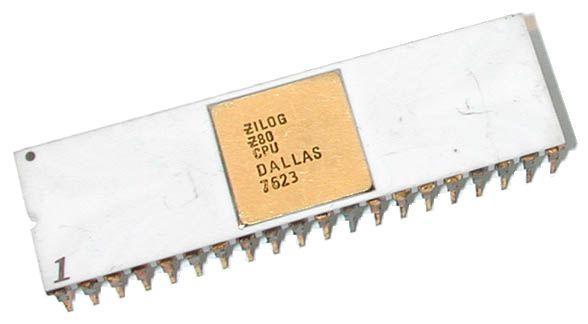

I asked Claude to build a Z80 emulator. The constraint was explicit: no reference to existing emulator source code. The inputs were the Zilog Z80 CPU User Manual, an architectural plan I wrote, and the test ROMs to validate against. Claude produced 1,300 lines of C covering every official Z80 instruction, undocumented flag behaviors, ACIA serial emulation, and CP/M support. It passed 117 unit tests. It boots CP/M and runs programs.

The emulator works. The question is what it means that it exists.

The Clean Room That Wasn't

"Clean room" is a legal term borrowed from semiconductor fabrication. In software, it describes a methodology where developers build from specifications and documentation without ever examining existing implementations. The purpose is to produce code that is legally independent of prior art. If you've never seen the original code, you can't have copied it.

The clean-room process was designed for human cognition. A developer reads a specification, forms a mental model, and writes code that implements the behavior the specification describes. The legal fiction is that the developer's mental model is informed solely by the specification, not by any existing implementation. In practice, developers have seen other implementations, read blog posts, studied textbook examples. The clean room is a discipline, not a guarantee: you follow the process, document that you followed it, and hope that's sufficient if someone challenges you.

When Claude writes a Z80 emulator from the Zilog manual, the clean-room concept doesn't dissolve because the AI is better at following the rules. It dissolves because the framework doesn't apply. Claude's training data includes dozens of Z80 emulators. The model has seen MAME's Z80 core, it has seen Fuse, it has seen whatever antirez published. The question of whether a specific output is "derived from" a specific input is unanswerable, because the model's internal state isn't decomposable into "I learned this from source A" and "I learned this from source B." The provenance that clean-room law requires you to demonstrate doesn't exist in a form that can be demonstrated.

But here's what's interesting: the emulator I directed Claude to produce is not a copy of any specific emulator. The architecture is mine. The bit-field decoding strategy (x/y/z/p/q decomposition of opcode bytes) was specified in my architectural plan. The test suite structure, the ACIA emulation interface, the system emulator's callback design: all specified by me and implemented by Claude from those specifications plus the Zilog manual. The output is an original assembly of knowledge. It's also an output of a system that has seen the source code it was told not to reference.
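For readers who haven't met the x/y/z/p/q decomposition, here is a minimal sketch of it in C. The field layout is the conventional one for an unprefixed Z80 opcode byte. The names `opcode_fields` and `decode_fields` are assumptions made for illustration, not identifiers from the emulator described here.

```c
#include <stdint.h>

/* Bit fields of an unprefixed Z80 opcode byte, laid out as xx yyy zzz.
 * The y field is further split into pp q.
 * Hypothetical names: not taken from the emulator discussed in the post. */
typedef struct {
    uint8_t x;  /* bits 7-6 */
    uint8_t y;  /* bits 5-3 */
    uint8_t z;  /* bits 2-0 */
    uint8_t p;  /* bits 5-4 (upper two bits of y) */
    uint8_t q;  /* bit 3 (lowest bit of y) */
} opcode_fields;

/* Split one opcode byte into its five fields. */
static opcode_fields decode_fields(uint8_t opcode)
{
    opcode_fields f;
    f.x = (uint8_t)(opcode >> 6);
    f.y = (uint8_t)((opcode >> 3) & 0x07);
    f.z = (uint8_t)(opcode & 0x07);
    f.p = (uint8_t)(f.y >> 1);
    f.q = (uint8_t)(f.y & 0x01);
    return f;
}
```

Decoding 0x80 (ADD A,B) gives x=2, y=0, z=0: in the x=2 group, y selects the ALU operation and z selects the source register. Decoding 0x3E (LD A,n) gives x=0, y=7, z=6, p=3, q=1. A decoder built on these fields can switch on x and z instead of enumerating all 256 opcodes by hand.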

The law has no category for this. It's not a copy. It's not independent. It's something else.

The Language That Doesn't Exist

The Z80 case is complicated by the fact that prior implementations exist. Somebody could, in theory, diff my emulator against MAME's and look for structural similarities. (They won't find meaningful ones, because the architecture is different, but the argument could be made.) The more interesting case eliminates this possibility entirely.

Lattice is a programming language I designed. It has a novel feature called the phase system: mutability is not a static attribute but a runtime property that values transition through, like matter moving between liquid and solid. You declare a value in flux (mutable), freeze it to fix (immutable), thaw it back if needed. The language has forge blocks for controlled mutation zones. None of this exists in any other language.

Claude writes Lattice code. It writes it well. It produces correct programs using the phase system, the concurrency primitives, and the bytecode VM's 100-opcode instruction set. It does this despite the fact that Lattice does not appear in its training data. The language was designed after Claude's knowledge cutoff. There is no Lattice source code on GitHub, no Stack Overflow answers, no blog posts (other than mine) explaining the syntax.

How does Claude write Lattice? Because Lattice's syntax looks like Rust. The curly braces, the type annotations, the pattern matching: Claude recognizes the structural similarity and maps its understanding of Rust-like languages onto the Lattice grammar. The phase-specific keywords (flux, fix, freeze, thaw, forge) are new, but they appear in contexts that are syntactically familiar. Claude doesn't need to have seen Lattice before. It needs to have seen languages that smell similar.

This is a fundamentally different kind of creation than what copyright law contemplates. Claude didn't copy Lattice code (none exists to copy). It didn't copy Rust code (Lattice isn't Rust). It transformed a grammar specification and a set of examples into working programs in a language that has no prior art. The specification became the implementation without passing through any intermediate step that could be called "copying."

Heidegger Saw This Coming

In 1954, Martin Heidegger published The Question Concerning Technology. His central argument: modern technology is not just a set of tools. It is a way of seeing the world. He called this way of seeing Enframing (Gestell): the tendency of modern technology to reveal everything as standing reserve (Bestand), raw material ordered into availability.

The example Heidegger used was a hydroelectric plant set into the Rhine. The river is no longer a river in the way an old bridge reveals it (something to cross, something to contemplate, something with its own presence). Dammed up into the plant, the river is revealed as a power source. The water is standing reserve: ordered, measured, extracted. The river hasn't changed physically. What changed is how technology frames it.

This is exactly what happens when Claude reads the Zilog Z80 CPU User Manual.

The manual is a specification: 332 pages of timing diagrams, instruction tables, register descriptions, and pin assignments. When a human developer reads it, the manual is a guide. The developer forms an understanding, makes design choices, writes code that reflects their interpretation of the specification. The manual and the implementation are connected through the developer's comprehension. The developer is present in the code in a way that matters both legally and philosophically.

When Claude reads the same manual, the specification becomes standing reserve. The timing diagrams are not studied; they are consumed. The instruction tables are not interpreted; they are transformed. The manual is raw material, ordered directly into code, the way the dam orders the river into electricity. There is no intermediate step of "understanding" in the human sense. There is a transformation from one representation (specification) to another (implementation), and the transformation is mechanical in a way that human interpretation is not.

This is what Heidegger meant by Enframing. Technology doesn't just use resources; it changes what counts as a resource. The Zilog manual was written as a reference for engineers. Enframing reveals it as raw material for code generation. The specification was always latently an implementation; the AI just makes the transformation explicit.

What Copyright Was Protecting

Copyright law protects "original works of authorship fixed in any tangible medium of expression." The key word is "original." A Z80 emulator is copyrightable because the programmer made creative choices in expressing the specification as code. Two programmers given the same Zilog manual will produce different emulators: different variable names, different control flow structures, different optimization strategies, different architectural decisions. The specification constrains the behavior. The expression is where the creativity lives.

This framework assumes that the gap between specification and implementation is where human creativity operates. The specification says "the ADD instruction sets the zero flag if the result is zero." A hundred programmers will write a hundred slightly different implementations of this behavior. Each is an original expression. Each is copyrightable.
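To make the point concrete, here is a sketch of two ways to express that one sentence in C. Both compute only the zero flag for an 8-bit add and ignore every other flag. The function names are assumptions for illustration. The one hardware fact used is that the zero flag is bit 6 of the Z80's F register.

```c
#include <stdint.h>

#define FLAG_Z 0x40u  /* zero flag: bit 6 of the Z80 F register */

/* Variant 1: an explicit branch on the truncated 8-bit result. */
static uint8_t add_zero_flag_branch(uint8_t a, uint8_t b)
{
    uint8_t result = (uint8_t)(a + b);
    if (result == 0) {
        return FLAG_Z;
    }
    return 0;
}

/* Variant 2: branchless; the comparison yields 0 or 1, shifted into bit 6. */
static uint8_t add_zero_flag_shift(uint8_t a, uint8_t b)
{
    uint8_t result = (uint8_t)(a + b);
    return (uint8_t)((result == 0) << 6);
}
```

The two functions return the same value for every pair of inputs, yet they differ in control flow and style. That difference, multiplied across a whole instruction set, is the kind of expressive variation the framework treats as originality.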

What happens when the gap closes? When the transformation from specification to implementation becomes mechanical, when there is no creative gap for originality to occupy, what is left to protect?

Claude's Z80 emulator makes specific structural choices: the x/y/z/p/q bit-field decomposition, the callback-based system bus interface, the T-state tracking architecture. These choices came from my architectural plan, not from Claude's autonomous creativity. I specified the structure; Claude filled it in from the Zilog manual. The "creative choices" that copyright relies on were mine (the architecture) and the specification's (the behavior). Claude's contribution was the transformation between the two, and that transformation is closer to compilation than to authorship.
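As a rough illustration of what a callback-based bus and T-state tracking can look like, here is a minimal sketch in C. Every name in it (`z80_sketch`, `mem_read_fn`, `z80_sketch_step`, `flat_ram_read`) is hypothetical and is not the interface of the emulator described here. It handles only two opcodes, NOP (4 T-states) and LD A,n (7 T-states).

```c
#include <stdint.h>

/* Hypothetical bus callback: the CPU core asks the host for a byte. */
typedef uint8_t (*mem_read_fn)(void *ctx, uint16_t addr);

/* Hypothetical CPU state, reduced to what this sketch needs. */
typedef struct {
    uint8_t     a;         /* accumulator */
    uint16_t    pc;        /* program counter */
    uint64_t    t_states;  /* running total of elapsed T-states */
    mem_read_fn mem_read;  /* host-supplied memory read */
    void       *ctx;       /* opaque host pointer handed back to the callback */
} z80_sketch;

/* Execute one instruction. Returns the T-states it took, or -1 for an
 * opcode this sketch does not implement (pc has already advanced past it). */
static int z80_sketch_step(z80_sketch *cpu)
{
    uint8_t op = cpu->mem_read(cpu->ctx, cpu->pc++);
    int cost;

    switch (op) {
    case 0x00:  /* NOP */
        cost = 4;
        break;
    case 0x3E:  /* LD A,n: fetch the immediate operand through the bus */
        cpu->a = cpu->mem_read(cpu->ctx, cpu->pc++);
        cost = 7;
        break;
    default:
        return -1;
    }

    cpu->t_states += (uint64_t)cost;
    return cost;
}

/* Example host: a flat 64 KiB RAM array behind the read callback. */
static uint8_t flat_ram_read(void *ctx, uint16_t addr)
{
    return ((const uint8_t *)ctx)[addr];
}
```

The core never touches memory directly, so the same step function can sit behind RAM, ROM banking, or memory-mapped devices by swapping the callback. Decisions of that kind are the ones the architectural plan fixed in advance.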

Lattice pushes this further. Claude writes programs in a language with no training data, from a grammar specification and examples I provided. The output is correct Lattice code. But who is the author? I designed the language. Claude learned it from my spec. The programs it produces are implementations of tasks I described. At no point did Claude exercise the kind of independent creative judgment that copyright assumes. It transformed a task description into code in a grammar it learned from me. The entire chain from specification to implementation is mechanical, even though the output looks exactly like something a human programmer would write.

The Dissolution

Clean-room reverse engineering was a legal ritual designed to prove that a human developer's mental model was not contaminated by existing code. The ritual made sense when the concern was human memory: a developer who has read source code might unconsciously reproduce it.

AI makes the ritual meaningless in two ways.

First, provenance is undemonstrable. You cannot prove that Claude's output is or isn't derived from a specific piece of training data, because the model's internal representations don't maintain source attribution. The clean-room question ("did the developer see the original code?") has no answerable equivalent for an LLM. The model has seen everything in its training data simultaneously. It cannot unsee selectively.

Second, the distinction between "specification" and "implementation" is collapsing. When the transformation between them is mechanical and instantaneous, the specification is the implementation in a meaningful sense. The Zilog manual contains the Z80 emulator the way an acorn contains an oak tree. The transformation from one to the other requires energy and process, but the information content is the same. Copyright protects the expression, but when the expression is a deterministic function of the specification, the creative contribution approaches zero.

This doesn't mean all AI-generated code is uncopyrightable. If I write a detailed architectural plan, direct Claude to implement it, review and revise the output, and make structural decisions throughout the process, the result reflects my creative choices expressed through an AI tool. The tool is more sophisticated than a compiler, but the relationship is similar: I made the design decisions; the tool translated them into a lower-level representation. The copyright, if it exists, is in my architectural choices, not in Claude's line-by-line implementation.

But if someone asks Claude to "write a Z80 emulator" with no architectural plan, no structural constraints, and no iterative review, and Claude produces a working emulator from its training data, who owns that code? Not the person who typed the prompt; they made no creative contribution beyond the request. Not Anthropic; they built the tool but didn't direct the output. Not the authors of the Z80 emulators in the training data; their code wasn't copied in any legally meaningful sense. The code exists in a copyright vacuum: produced by a process that doesn't have an author in the way the law requires.

Why This Matters Now

The velocity of AI-assisted code production is accelerating. Every developer using Claude, Copilot, or Cursor is producing code whose provenance is uncertain. The code works. It passes tests. It ships to production. And its relationship to the training data that informed it is, in a strict legal sense, unknown and unknowable.

The current legal frameworks (copyright, clean room, fair use) were designed for a world where code was written by humans who could testify about their creative process. "I read the specification. I designed the architecture. I wrote the code. I did not reference any existing implementation." This testimony is the foundation of clean-room defense. An LLM cannot provide it, and the human directing the LLM can only testify about their own contributions (the prompt, the architectural plan, the review), not about what the model drew from.

I took a CS ethics course as an undergraduate. The cases we studied (Compaq's clean-room reimplementation of the IBM PC BIOS, SCO's claim that Linux contained UNIX code, DeCSS and the DMCA's prohibition on circumventing copy protection) all assumed a human author whose creative process could be examined and whose sources could be traced. Every one of those cases would be decided differently if the defendant had said "I told an AI to implement the specification and it produced this code." The existing precedent doesn't apply, and the new precedent doesn't exist yet.

The Acorn and the Oak

Heidegger would say that the danger of Enframing is not that it's wrong but that it's totalizing. When technology reveals everything as standing reserve, we lose the ability to see things as they are. The river becomes only a power source. The specification becomes only raw material for code generation. The act of programming becomes only a transformation pipeline from input to output.

What gets lost is what the clean-room process was actually designed to protect: the space between specification and implementation where human understanding operates. That space is where a developer reads "the ADD instruction sets the zero flag if the result is zero" and decides how to express that in code. The decision is small. The creativity is modest. But it's real, and it's human, and it's the entire basis of software copyright.

AI doesn't eliminate that space. My Z80 emulator project included genuine creative decisions: the architecture, the test strategy, the system emulator design. Lattice exists because I designed a novel type system that no AI would have invented from existing languages. The creative space still exists for the people who operate at the design level.

But for the implementation level, for the transformation from "what this should do" to "code that does it," the space is closing. The specification is becoming the implementation. The acorn is becoming the oak without passing through the seasons of human comprehension. And the legal and philosophical frameworks we built for a world where that transformation required human creativity haven't caught up.

They will. The question is how much code ships before they do.